Purpose-built compute technology that takes sensory input through to accurate perception processing provides a path forward, addressing concerns around latency, jitter, and power consumption.

Contributed by Recogni

Although it may not be the first technology that comes to mind when thinking about autonomous vehicles (AVs), compute plays a critical role. To understand that role, we need to understand the fundamental prerequisite for a vehicle to navigate in a three-dimensional world: close-to-perfect perception.

What exactly does “close to perfect” mean? For starters, it means the ability to perceive in all directions around the vehicle. This multidirectional perception is important for several reasons. Take the example of an 18-wheeler truck cruising along on the highway. That truck could easily run into a small subcompact car sitting in its blind spot as it does a lane change.

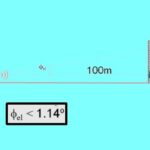

Likewise, a vehicle coming to a four-way stop sign must see to the right and left for a hundred meters or more in case another driver runs through the intersection without stopping. Similarly, it is crucial to see a child running into the street. Moreover, AV perception must be close to flawless under all weather conditions. Rain, sleet, snow, slippery roads, and other elements give vehicles less stopping distance to work with.

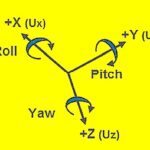

To help give vehicles “close to perfect” perception, automakers have provided an array of sensory functions that include radar, lidar, and several sets of cameras. Typically, radar and lidar components function as redundant “second sets of eyes” to verify what the cameras see. However, the higher the resolution of the camera video, the easier it is for the vehicle to identify objects, minimizing the need for disparate sensors. The vehicle crunches the incoming visual data to determine what is in its view, comparing the images it is ingesting to what its algorithms have been trained to recognize. With perception processing complete, higher-level AV systems such as motion control turn the steering wheel to steer away from a fallen branch or press the brake to avoid hitting the car in front. This chain of events – collection of visual data, perception processing, and then motion control – illustrates why high-performance, deterministic compute is critical for autonomy.

For example, consider a set of eight 8 MP cameras positioned around a vehicle capturing the scene at 30 fps. Even at just one byte per pixel, they will generate roughly 6.9 terabytes of raw data hourly. Lidar sampling rates are on the order of 1.5 million data points/sec. One radar sensor samples at an effective rate of 20 MS/sec over a 10-msec measurement time with a 50-msec cycle time. If the ADC is at 12 bits/sample, a quick calculation gives 1.2 MB per measurement and a 24 MB/sec data rate for four radar receive channels.
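The sensor arithmetic above can be reproduced with a short sketch. The function names are illustrative, and the one-byte-per-pixel depth is an assumption (the text does not state a pixel format); under that assumption the camera array comes to about 6.9 × 10^12 bytes per hour, and the radar figures match the 1.2 MB and 24 MB/sec quoted.

```python
def camera_bytes_per_hour(num_cameras, megapixels, fps, bytes_per_pixel=1):
    """Raw, uncompressed camera output in bytes per hour.

    bytes_per_pixel=1 is an assumption; real sensors may use more.
    """
    pixels_per_second = num_cameras * megapixels * 1_000_000 * fps
    return pixels_per_second * bytes_per_pixel * 3600


def radar_bytes_per_measurement(sample_rate_hz, measurement_ms, bits_per_sample, channels):
    """Bytes captured across all receive channels in one measurement window."""
    samples_per_channel = sample_rate_hz * measurement_ms // 1000
    return samples_per_channel * bits_per_sample // 8 * channels


def radar_bytes_per_second(bytes_per_measurement, cycle_ms):
    """Sustained data rate when one measurement is taken every cycle."""
    return bytes_per_measurement * 1000 // cycle_ms


camera = camera_bytes_per_hour(num_cameras=8, megapixels=8, fps=30)
per_measurement = radar_bytes_per_measurement(20_000_000, 10, 12, channels=4)
per_second = radar_bytes_per_second(per_measurement, cycle_ms=50)

print(f"cameras: {camera / 1e12:.2f} TB/hour")
print(f"radar:   {per_measurement / 1e6:.1f} MB/measurement, {per_second / 1e6:.0f} MB/sec")
```

A higher pixel depth scales the camera figure linearly, so three bytes per pixel would triple it.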

There is no way that flood of data can be shipped to the cloud, processed, and then sent back to the vehicle fast enough to avoid hitting a pedestrian. That compute must take place locally so that the vehicle can comprehend its world nearly instantaneously and then perform whatever evasive actions might be necessary. In this regard, autonomous systems must overcome three hurdles in particular: latency, jitter, and power consumption.

Latency

Consider the video stream in autonomous driving scenarios. The size of the video frames can bring vehicle data networks to their knees. Video compression can reduce the size of the original stream, but this process introduces significant overhead in the form of video latency, the time it takes for a data packet to travel across the network. Moreover, compression is a time-consuming process that makes heavy use of CPU resources. The protocol used to convey the video stream over the network (such as RTMP, RTSP, or HTTP) entails a muxing step that adds to the streaming latency. Additionally, it takes time for the video data to flow to its destination and be decoded at the player side. All these factors together create the so-called glass-to-glass latency. The amount of latency depends on many factors, such as the hardware specifications, the available network bandwidth, and the technologies employed. This latency must be minimized throughout the streaming session.

Jitter

Whereas latency arises from delays in the network, jitter is defined as a change in the amount of latency. In the AV world, for example, it might take frame 1 from a camera 20 msec to traverse a network, while frame 92 takes 250 msec and frame 100 takes 5 msec. The problem with jitter is that too much of it runs the risk of causing delays that exceed the worst-case data transmission delay that the AV system is designed to handle. This can become a safety issue for the vehicle control system.
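As a minimal sketch of the idea, the three frame latencies from the example can be checked against a worst-case budget. The 100-msec budget and the function names are hypothetical, chosen only for illustration; peak-to-peak variation is one simple way to express jitter, not the only one.

```python
def peak_to_peak_jitter(latencies_ms):
    """Spread between the slowest and fastest frame, in msec."""
    values = list(latencies_ms.values())
    return max(values) - min(values)


def frames_over_budget(latencies_ms, budget_ms):
    """Frames whose latency exceeds the worst-case delay the system tolerates."""
    return {frame: lat for frame, lat in latencies_ms.items() if lat > budget_ms}


# Frame number -> network latency in msec, taken from the example in the text.
latencies = {1: 20, 92: 250, 100: 5}

# Hypothetical design limit; the article does not specify one.
WORST_CASE_BUDGET_MS = 100

print("jitter (msec):", peak_to_peak_jitter(latencies))
print("late frames:", frames_over_budget(latencies, WORST_CASE_BUDGET_MS))
```

With these numbers, only frame 92 exceeds the assumed budget, even though the average latency looks moderate.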

Power consumption

Consider an EV with a 60-kWh battery used for six hours during a daily commute. If compute draws 1 kW, six hours of driving consumes 6 kWh. In other words, compute consumes 10% of the car’s energy. Any watt-hour of energy that computing facilities consume is one watt-hour unavailable for putting the EV in motion, thus reducing the driving range. Now consider a computational platform that draws only 200 W. Here six hours of driving will consume only 1.2 kWh, just 2% of the car’s energy. The impact on the car’s range is obvious.
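The comparison can be worked through in a few lines, treating each compute figure as a constant power draw in watts and multiplying by driving time to get energy. The function names are illustrative, and a constant draw is a simplifying assumption.

```python
def compute_energy_kwh(power_watts, hours):
    """Energy used by the compute platform: power (W) x time (h), in kWh."""
    return power_watts * hours / 1000


def battery_share(energy_kwh, battery_kwh):
    """Fraction of the battery's capacity spent on compute."""
    return energy_kwh / battery_kwh


BATTERY_KWH = 60
DRIVE_HOURS = 6

for power_watts in (1000, 200):
    energy = compute_energy_kwh(power_watts, DRIVE_HOURS)
    share = battery_share(energy, BATTERY_KWH)
    print(f"{power_watts} W for {DRIVE_HOURS} h: {energy:.1f} kWh, {share:.0%} of the battery")
```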

Power-efficient computing is particularly important because compute demand rises significantly for higher camera resolution and video frame rates. Thus the ability to resolve objects at long distances in camera images has surprisingly far-reaching consequences.

It’s increasingly clear that legacy compute technology won’t cut it in AVs. Historically, autonomous vehicles have drawn upon CPUs and GPUs originally designed for other purposes. The problem is that these chips carry out their compute duties with latency and jitter that aren’t acceptable in AV scenarios, and they consume appreciable power. A better approach is purpose-built chip technology designed specifically for the AV use case. Such a chip would need to provide object detection accuracy up to 1 km in real time under various road and environmental conditions while processing multiple streams from ultra-high-resolution, high-frame-rate cameras.

At 30 fps, a new piece of camera data comes in roughly every 33 msec. At 60 fps, information comes in every 16 msec. At 120 fps, information arrives every 8 msec. From last-pixel-out to perception results, processing should take less than 10 msec. This interval gives the vehicle ample reaction time to navigate safely, but it requires Peta-Op-class inference (a quadrillion deep learning operations for 1 kilowatt).
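The frame intervals follow directly from the frame rate (1000 msec divided by frames per second); the figures in the text are these values rounded down. The sketch below also compares each interval with the 10-msec processing target. The function names are illustrative.

```python
def frame_interval_ms(fps):
    """Time between consecutive frames, in msec."""
    return 1000 / fps


def fits_within_interval(processing_ms, fps):
    """True if one frame is fully processed before the next frame arrives."""
    return processing_ms <= frame_interval_ms(fps)


PROCESSING_TARGET_MS = 10  # last-pixel-out to perception results, from the text

for fps in (30, 60, 120):
    interval = frame_interval_ms(fps)
    fits = fits_within_interval(PROCESSING_TARGET_MS, fps)
    print(f"{fps:>3} fps: {interval:.1f} msec between frames, fits={fits}")
```

Where the processing time exceeds the frame interval, as with a full 10 msec at 120 fps, work on consecutive frames would have to overlap, for example in a pipeline.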

Meanwhile, low power consumption can only be realized through a combination of novel power-efficient logarithmic computation; a highly optimized acceleration engine, particularly for 3×3 convolutions; a novel compression scheme (generally using statistical qualities of the image) to reduce memory data transfers; and minimized DRAM accesses. These are not off-the-shelf capabilities with legacy chips.

Briefly, a convolution matrix, or kernel, is a small matrix used for edge detection, among other things. Detection takes place via a convolution between the kernel and an image, and a 3×3 kernel covers a three-by-three patch of pixels at a time. These should not be confused with 3D convolutions, which apply a 3D filter that moves in three directions to calculate low-level feature representations and whose output is a 3D volume such as a cube or cuboid.
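To make the edge-detection use concrete, here is a minimal pure-Python sketch of a 3×3 convolution over a tiny grayscale image. The Sobel-style kernel and the sample image are illustrative choices, not anything specified in the article, and the loop applies the kernel without flipping it, as deep learning frameworks conventionally do. A purpose-built accelerator would perform the same multiply-accumulate pattern in hardware.

```python
def conv3x3(image, kernel):
    """Slide a 3x3 kernel over a 2D image (no padding, stride 1).

    Each output value is the sum of the element-wise products between the
    kernel and the 3x3 image patch beneath it.
    """
    rows, cols = len(image), len(image[0])
    output = []
    for r in range(rows - 2):
        out_row = []
        for c in range(cols - 2):
            acc = 0
            for i in range(3):
                for j in range(3):
                    acc += image[r + i][c + j] * kernel[i][j]
            out_row.append(acc)
        output.append(out_row)
    return output


# Illustrative kernel that responds to left-to-right brightness changes.
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

# Dark region on the left, bright region on the right: one vertical edge.
image = [[0, 0, 0, 10, 10, 10] for _ in range(4)]

for row in conv3x3(image, SOBEL_X):
    print(row)
```

The output is nonzero only where the 3×3 window straddles the boundary between the dark and bright regions, which is how the kernel marks the edge.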

We are now at an inflection point where further advances in AV technology will only come by solving fundamental constraints around compute. Purpose-built compute technology that addresses concerns about latency, jitter, and power consumption can kick AV efforts into high gear.