Newer fabric network input/output (I/O) cables and connectors support higher speeds, lower latency, and the latest interfaces, including the IEEE 802.3df Ethernet 200G per-lane specification project and modified Ethernet versions such as RoCE and iWARP.

Other new cable and connector developments aim to support standard InfiniBand XDR 200G and modified versions, such as Atos’ BXI and Cornelis’ Omni-Path Express.

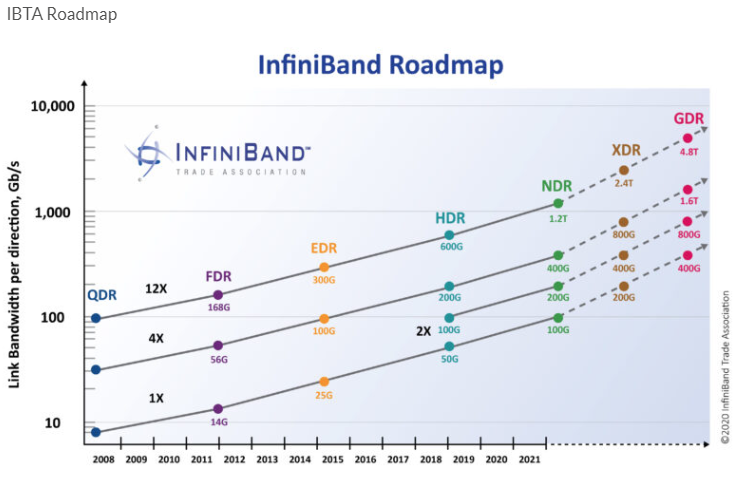

End-user demands are also pushing the InfiniBand Trade Association (IBTA) to research GDR 400G per-lane optical links. The IBTA maintains and furthers the InfiniBand Architecture specification, which defines hardware transport protocols that support reliable messaging (send/receive) and memory manipulation semantics without software intervention.

This IBTA roadmap is likely to be revised later this year with the addition of new target dates.

The high-performance cable market continues to expand with new products and developments, including:

- NDR 100G link applications

- IEEE 802.3ck 106G per-lane Ethernet products

- 1X link-use SFP (small form-factor pluggable) cables and connectors

- 2X link-use SFP-DD (double-density) or DSFP (dual small form-factor pluggable) cables and connectors

- 4X link-use QSFP (quad small form-factor pluggable) and OSFP (octal small form-factor pluggable) interconnects

- 12X link-use CXP interconnects

The QSFP-DD and OSFP-XD interconnects can be used with multi-port, 4- and 8-legged fan-out cable assemblies for switch-to-switch applications. So far, the 8X and 10X-lane implementations have also seen some demand.
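To make the relationship between link width and per-lane rate concrete, here is a minimal sketch that multiplies the link widths mentioned above by the nominal per-lane rates named in this article (NDR 100G, XDR 200G, GDR 400G). The dictionary and function names are illustrative only, and the results are nominal aggregate rates, not measured throughput.

```python
# Nominal per-lane rates in Gb/s, as named in this article.
PER_LANE_GBPS = {"NDR": 100, "XDR": 200, "GDR": 400}

# Link widths mentioned in the text (lanes per link).
LINK_WIDTHS = {"1X": 1, "2X": 2, "4X": 4, "8X": 8, "12X": 12}


def aggregate_gbps(generation: str, width: str) -> int:
    """Return the nominal aggregate rate: lane count times per-lane rate."""
    return LINK_WIDTHS[width] * PER_LANE_GBPS[generation]


if __name__ == "__main__":
    # Print one row per generation, one column per link width.
    for gen in PER_LANE_GBPS:
        row = ", ".join(f"{w}={aggregate_gbps(gen, w)}G" for w in LINK_WIDTHS)
        print(f"{gen}: {row}")
```

A 4X link at the NDR per-lane rate works out to a nominal 400G, and the same 4X width at the GDR per-lane rate would reach a nominal 1600G.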

The use of larger pluggable interconnects has reduced the port count available on a one rack-unit (RU) faceplate, which is not ideal for every network device. Several OEMs and end users have been searching for new solutions to this low port-density problem.

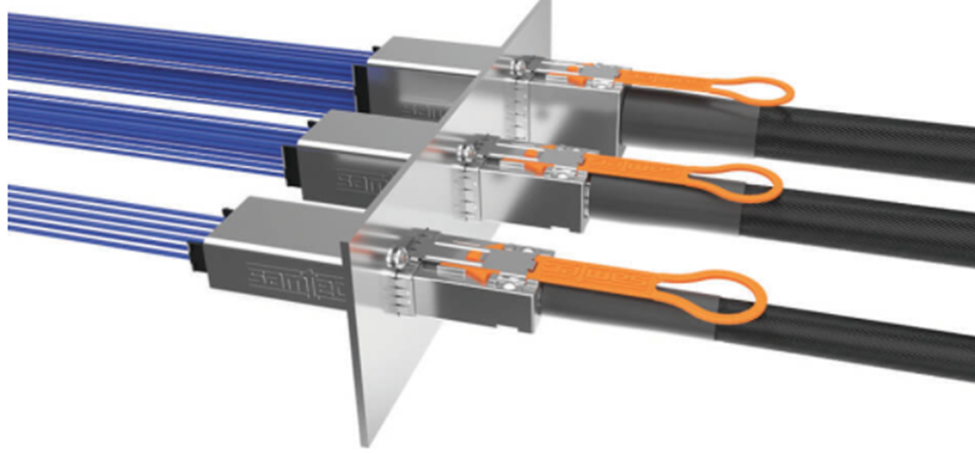

One option offering a higher circuit count and greater port density is Samtec’s Front Panel I/O NovaRay connector and cable family. It currently comes in various lane counts and form factors for 112G per-lane, copper-cable internal and external links, with a 224G version likely already in development. It could also work for passive and active copper cables that use different Flyover styles.

Newer 112G and developing 224G backplane twin-axial cabling solutions are advancing to support internal and external fabric applications. The external versions typically use custom metal plug shells that mate with metal sockets or receptacles.

An example is Samtec’s internal ExaMAX2 cable.

New optical connector

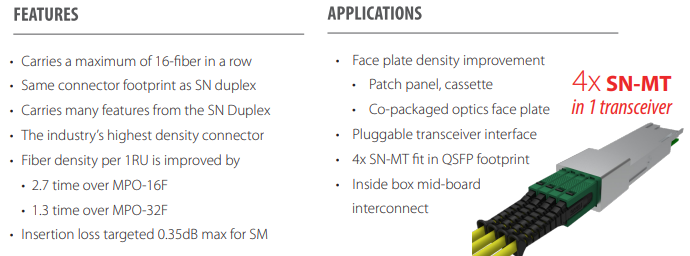

Despite demands for increased faceplate port densities, higher lane counts, and greater capacity radix switch topologies, there’s also a growing interest in smaller connector solutions. Take Senko’s SN-MT multi-fiber push-on (MPO) connector as an example. It offers a 2.7X density increase compared to the conventional MPO-16F, and it has the same footprint as an SN duplex.

This connector family includes Bare Ribbon, Behind the Wall, Shuttered, and Mid-Board configuration types. Its features are described below.

Other network equipment developers are addressing the port density and lane size issues by employing single-lambda, single-mode optics at 100G and 200G per wavelength. Implementations carrying 400G per fiber in pluggable AOCs, AOMs, and front-panel faceplates are under development. The IEEE 802.3cu specification covers single-lambda operation at 100G per wavelength.

Trends

The demand for higher port densities and lane counts is primarily driven by fabric, accelerator, storage, system bus, telecom, and other network segment applications. Other fabric-network interfaces gaining new optical connectors with advanced features include NVMe, Gen-Z, CXL, Fibre Channel 224G, OIF 224G, NUMAlink 8/9, and TOFU 4.0 interconnects. Additionally, the IPEC consortium has started a new 224G per-lane project.

What’s more, updated mass-fusion, multi-fiber termination equipment is facilitating higher-quality and more cost-effective optical connections. Optical fiber cables are also more flexible, easier to route, lighter, and better for airflow. Co-packaged optics (CPO) internal interconnect implementations typically use external, passive optical cables on the faceplate.

A new class of fabric data center (DC) network connectors and cables is also in development to support connectivity in liquid-immersed, cryogenic, and quantum computing systems. Although optical connections would offer advantages over copper connections, most of these cables are coaxial and twin-axial. So, it will be interesting to review the I/O signaling channel parameters and measurements for these applications.

The Defense Advanced Research Projects Agency’s (DARPA) requirements focus on 8- and 16-lane per-cable interface applications. Currently, several I/O interface standards groups are discussing the 200G and 400G per-lane connector and cabling specification parameters, measurement methods, media types, connector-cabling implementation, and compliance-interoperability plugfests.

Final thoughts

Like any technology, cable and connector options have evolved over the last 20 years. Where the ideal was once VHDCI connectors with 34TP or 68-wire conductor cable for applications such as Wide Parallel UltraSCSI, it shifted to much smaller 1X and 4X high-speed serial data connector (HSSDC) copper cabling options.

Over time, slightly larger SFP/QSFP connectors with 2 or 8 twin-axial pairs were preferred for their higher performance and lower latency in conjunction with the narrower, serial Fibre Channel and InfiniBand interconnects.

Somewhat ironically, today’s OSFP-XD connectors are about the same form-factor size as older VHDCI connectors. The advanced fabric I/O interfaces require larger OSFP-XD cables, which offer 32 twin-axial pairs or 64 wire conductors and are sometimes referred to as wide serial interface solutions. The cable and connector size is similar to what it was a couple of decades ago.
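The pair and conductor counts quoted for these connectors follow from simple arithmetic, sketched below under stated assumptions: each lane uses one twin-axial pair per direction (transmit and receive), and each pair has two signal conductors. The lane counts in the table (1 for SFP, 4 for QSFP, 16 for OSFP-XD) are assumed here because they are consistent with the 2, 8, and 32 pair figures in the text. Drain wires, sideband signals, and power conductors are deliberately left out.

```python
# Assumed lane counts per connector type (illustrative, signal lanes only).
LANES = {"SFP": 1, "QSFP": 4, "OSFP-XD": 16}


def twinax_pairs(lanes: int) -> int:
    """One twin-axial pair per direction (TX and RX) for every lane."""
    return lanes * 2


def wire_conductors(lanes: int) -> int:
    """Each twin-axial pair carries two signal conductors."""
    return twinax_pairs(lanes) * 2


if __name__ == "__main__":
    for name, lanes in LANES.items():
        print(f"{name}: {lanes} lanes, {twinax_pairs(lanes)} pairs, "
              f"{wire_conductors(lanes)} conductors")
```

Under these assumptions, a 16-lane cable carries 64 signal conductors, close to the 68 wires of the older VHDCI cabling it is compared with.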

However, smaller pluggable options are necessary for copper-centric systems. This is where the Samtec NovaRay Front Panel I/O connector and the Senko SN-MT connectors are likely to impact the market, supporting higher-density link/port fabric network architectures. Smaller and lighter form factors are also ideal for high-volume automated manufacturing.

In regard to the lane options, trunking, multi-trunking, link aggregation (IEEE 802.3ad), and multiple-wavelength lambda streams have been used to aggregate lanes, including multiple lanes in one fiber. It’s expected that multi-core or multi-hollow fiber will be in demand in the future. IB NDR WAN long-haul interconnect products, developed by Nvidia/Mellanox, work well with the growing HPC fabric topologies.