Communication systems such as 5G that operate at today’s wide bandwidths and high orders of modulation need to maintain proper SNR and EVM. Path loss and phase noise can get in the way.

Our insatiable hunger for available spectrum has pushed wireless communications technologies to utilize higher frequency bands. In satellite communications, for example, where the International Telecommunication Union (ITU) allocates the 71 to 76 GHz / 81 to 86 GHz segment of the W band to satellite services, commercial satellites with W-band radio transmitters have been in play since the first launch in June 2021. In cellular communications, the 3rd Generation Partnership Project (3GPP) Release 17, completed in the summer of 2022, extended the range of 5G New Radio (NR) with the FR2-2 band, which covers 52.6 GHz to 71 GHz.

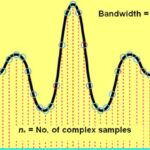

At millimeter wave (mmWave) frequencies, there is more available bandwidth. Both the throughput and latency of cellular communications improve at wider bandwidths. At the same time, 5G combines orthogonal frequency division multiplexing (OFDM) with modulation formats ranging from quadrature phase shift keying (QPSK) to higher orders of quadrature amplitude modulation (QAM). Higher-order formats, which place symbols closer together, achieve faster data rates without increasing signal bandwidth.
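The link between modulation order and data rate can be made concrete with a short sketch. It assumes an ideal M-point constellation that carries log2(M) bits per symbol; the function name and the list of formats are illustrative only.

```python
def bits_per_symbol(constellation_points: int) -> int:
    """Bits carried by one symbol of an M-point constellation (log2 of M).

    Assumes M is a power of two, as it is for QPSK and square QAM formats.
    """
    return constellation_points.bit_length() - 1


# Illustrative formats; the symbol rate (and so the bandwidth) is unchanged.
for name, points in [("QPSK", 4), ("16QAM", 16), ("64QAM", 64), ("256QAM", 256)]:
    print(f"{name}: {bits_per_symbol(points)} bits per symbol")
```

At a fixed symbol rate, moving from QPSK to 256QAM quadruples the bits carried per symbol, but the constellation points sit closer together, so the same amount of noise causes more symbol errors.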

Both wider bandwidths and higher-order modulation schemes increase throughput. Both, however, also have a serious drawback: the introduction of more noise that degrades system performance. Wider bandwidths also introduce other design and test challenges including path loss, frequency response, and phase noise.

The more noise, the lower the signal-to-noise ratio (SNR) of the communication system. To maintain a proper SNR and thus sustainable communication links, it is critical that cellular networks and devices transmit a high-quality signal and reduce system noise. Achieving acceptable SNR demands accurate characterization of each component of the system.
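One way to see why wider bandwidths admit more noise is the thermal noise floor, which rises by 10·log10 of the bandwidth. The sketch below assumes the standard approximation of -174 dBm/Hz at roughly 290 K; the bandwidths, signal level, and noise figure are hypothetical examples, not values from a specification.

```python
import math

# Thermal noise density kT at about 290 K (standard approximation).
THERMAL_NOISE_DBM_PER_HZ = -174.0


def noise_floor_dbm(bandwidth_hz: float, noise_figure_db: float = 0.0) -> float:
    """Noise power in the given bandwidth, including the receiver noise figure."""
    return THERMAL_NOISE_DBM_PER_HZ + 10 * math.log10(bandwidth_hz) + noise_figure_db


def snr_db(signal_dbm: float, bandwidth_hz: float, noise_figure_db: float = 0.0) -> float:
    """SNR of a signal at the given power against the thermal noise floor."""
    return signal_dbm - noise_floor_dbm(bandwidth_hz, noise_figure_db)


# Hypothetical comparison: same -60 dBm signal, 10 dB noise figure.
for bandwidth_hz in (20e6, 100e6, 400e6):
    print(f"{bandwidth_hz / 1e6:.0f} MHz: SNR = {snr_db(-60.0, bandwidth_hz, 10.0):.1f} dB")
```

With everything else held constant, widening the channel from 20 MHz to 400 MHz raises the noise floor, and lowers the SNR, by about 13 dB.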

Massive MIMO

In addition to the challenges outlined above, another aspect of 5G NR that complicates matters for test and measurement is the use of massive multiple-input/multiple-output (MIMO) technology to increase spectral efficiency by enabling use of narrower, more focused antenna beams. The incorporation of massive MIMO and associated multi-antenna techniques such as spatial diversity, spatial multiplexing, and beamforming (shown in Figure 1) in 5G NR can improve both SNR and data throughput.

Spatial diversity — a technique that consists of sending multiple copies of the same signal via multiple antennas — improves signal reliability by increasing the chances of it being properly received. Spatial multiplexing, meanwhile, increases overall data capacity by allowing the transmission of independent streams over multiple channels. Both techniques are critical to 5G’s promise of higher reliability, faster transmission speeds, and ultra-low latency.

5G NR specifies eight spatial streams for Frequency Range 1 (FR1) to improve spectral efficiency without increasing signal bandwidth. 3GPP’s Technical Specification (TS) 38.141-1 defines performance tests with multiple spatial streams for 5G NR base stations. These tests require up to two transmitter antennas and eight receiver antennas. Each test case applies specific propagation conditions, correlation matrix, and SNR.

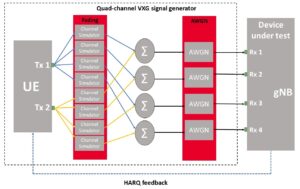

Figure 2. This 5G base station MIMO test configuration for two transmitter antennas and four receiver antennas uses hybrid automatic repeat request (HARQ) feedback.

Testing massive MIMO antenna arrays and systems that use spatial diversity, spatial multiplexing, and other multi-antenna techniques requires multichannel signals with stable phase relationships between them. Commercial signal generators use independent synthesizers to upconvert an intermediate frequency (IF) signal to an RF signal. Precise timing synchronization between channels is required to simulate multichannel test signals, as shown in Figure 2.

Path loss

Excessive path loss at mmWave frequencies can also limit the power of the RF signal and make the required over-the-air (OTA) testing more challenging. (The components designed for mmWave devices are compact and highly integrated, with no place to probe, so OTA tests are required.)

Path loss grows more significant at higher frequencies due to the nature of radiated waves. While the far-field region of a 15 cm 4G LTE device operating at 2 GHz starts at 0.3 m with a path loss of 28 dB, the far-field region of a 5G NR device running at 28 GHz starts at 4.2 m with a path loss of 73 dB.
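These figures follow from two textbook relations: the Fraunhofer far-field distance, 2D²/λ, and the free-space path loss, 20·log10(4πd/λ). The sketch below is an illustration under those assumptions; it also assumes the 28 GHz device has the same 15 cm aperture as the LTE example, and the function names are hypothetical.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def far_field_distance_m(aperture_m: float, freq_hz: float) -> float:
    """Fraunhofer far-field boundary, 2 * D^2 / wavelength."""
    wavelength_m = SPEED_OF_LIGHT_M_PER_S / freq_hz
    return 2 * aperture_m ** 2 / wavelength_m


def free_space_path_loss_db(distance_m: float, freq_hz: float) -> float:
    """Free-space path loss between isotropic antennas."""
    wavelength_m = SPEED_OF_LIGHT_M_PER_S / freq_hz
    return 20 * math.log10(4 * math.pi * distance_m / wavelength_m)


# Same 15 cm device size at both frequencies (an assumption for the 28 GHz case).
for freq_hz in (2e9, 28e9):
    distance_m = far_field_distance_m(0.15, freq_hz)
    loss_db = free_space_path_loss_db(distance_m, freq_hz)
    print(f"{freq_hz / 1e9:.0f} GHz: far field at {distance_m:.2f} m, path loss {loss_db:.1f} dB")
```

Under these assumptions the calculation gives about 0.3 m and 28 dB at 2 GHz, and about 4.2 m and roughly 74 dB at 28 GHz, close to the 73 dB quoted above. Because both the far-field distance and the loss at a given distance scale with frequency, the loss at the far-field boundary grows with the fourth power of frequency.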

Because traditional OTA methods would require an excessively large far-field test chamber and incur too much path loss for accurate and repeatable measurements at mmWave frequencies, 3GPP approved an indirect far-field (IFF) test method based on a compact antenna test range (CATR). With the IFF test method, a CATR uses a parabolic reflector to collimate the signals transmitted by the probe antenna and create a far-field test environment. This method provides a much shorter test distance with less path loss than the direct far-field method for measuring mmWave devices.

Excessive path loss between the instruments and the device under test (DUT) at mmWave frequencies reduces the SNR, making measurements such as error vector magnitude (EVM), adjacent channel power (ACP), and spurious emissions more challenging.
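To illustrate how SNR bounds EVM, the sketch below uses a common approximation: when additive noise is the only impairment and EVM is normalized to the rms signal level, EVM is about 10^(-SNR/20). This ignores phase noise, distortion, and other error sources, so it gives a floor rather than a prediction; the SNR values are hypothetical.

```python
def evm_percent_from_snr(snr_db: float) -> float:
    """Noise-limited EVM floor in percent, assuming noise is the only impairment."""
    return 100.0 * 10 ** (-snr_db / 20)


# Hypothetical SNR values at the analyzer after path loss.
for snr_db in (20.0, 30.0, 40.0):
    print(f"SNR {snr_db:.0f} dB -> EVM floor {evm_percent_from_snr(snr_db):.2f}%")
```

Every 20 dB of SNR lost to path loss raises this EVM floor by a factor of ten, which is why a measurement that is comfortable in a cabled setup can become marginal over the air.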

To compensate for path loss and improve the SNR, engineers typically reduce the signal analyzer’s input attenuation. Even with the attenuation set to 0 dB, the SNR can still be too low for accurate signal analysis, so minimizing path loss in the test setup is critical for mmWave testing.

Figure 3. Phase noise in any local oscillator (LO) in the transmitter will transfer the phase error to the output-modulated signal (the figure shows a QPSK-modulated signal as an example). When the signal is demodulated, the measured symbols (shown as green dots) vary in “phase” around the ideal symbol reference point, resulting in poor signal quality.

Phase noise

Accurate EVM measurement depends on several factors, including the impact of phase noise. Phase noise is a random phase error of a carrier signal. It is commonly defined as the ratio of the noise power density at an offset frequency from the carrier to the total power of the carrier signal, typically expressed in dBc/Hz. Phase noise is a more significant problem at mmWave frequencies because operating at higher frequencies increases noise power spectral density, thus decreasing SNR and making it more difficult to perform accurate EVM measurements (Figure 3).
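A rough way to relate a phase noise profile to EVM is to integrate the single-sideband phase noise over the offsets of interest and convert the result to an rms phase error. The sketch below assumes straight-line segments between profile points on a log-frequency axis and the small-angle approximation that the phase-noise-limited EVM roughly equals the rms phase error in radians. The profile values are hypothetical, not data for any real oscillator.

```python
import math


def integrated_phase_error_rad(profile, points_per_segment=200):
    """rms phase error from a single-sideband profile of (offset_hz, dBc/Hz) points.

    Points must be sorted by offset. Each segment is treated as a straight line
    in dB versus log frequency and integrated with the trapezoidal rule.
    """
    total_power = 0.0
    for (f1, l1), (f2, l2) in zip(profile, profile[1:]):
        prev_f, prev_p = f1, 10 ** (l1 / 10)
        for i in range(1, points_per_segment + 1):
            frac = i / points_per_segment
            f = f1 * (f2 / f1) ** frac   # log-spaced offset frequency
            level_db = l1 + (l2 - l1) * frac
            p = 10 ** (level_db / 10)    # linear power density relative to carrier
            total_power += 0.5 * (prev_p + p) * (f - prev_f)
            prev_f, prev_p = f, p
    return math.sqrt(2 * total_power)    # factor of 2 accounts for both sidebands


# Hypothetical LO profile: (offset in Hz, phase noise in dBc/Hz).
example_profile = [(1e3, -90.0), (1e4, -100.0), (1e5, -110.0), (1e6, -125.0), (1e7, -140.0)]
phase_rad = integrated_phase_error_rad(example_profile)
print(f"rms phase error: {math.degrees(phase_rad):.3f} degrees")
print(f"approximate EVM contribution: {100 * phase_rad:.2f}%")
```

The integration limits matter: in OFDM systems, phase noise close to the carrier is partly tracked out by the receiver, so the offsets to include depend on the signal and the demodulator settings.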

In addition, similar to overcoming path loss, minimizing the impact of phase noise depends on using the optimum levels for a signal analyzer’s mixer and digitizer. It is also critical to choose the optimum phase noise configuration of the local oscillator (LO) to achieve the best results.

Wireless standards specify transmitter measurements at the maximum output power, so engineers often attenuate the signal at the signal analyzer’s input to ensure that a high-power signal does not drive the analyzer into distortion. In OTA tests and test setups with significant insertion loss, however, the input signal level can be lower than the optimum mixer level.

Optimal setting of the signal analyzer’s input mixer level requires balancing distortion performance against noise sensitivity. The higher the input mixer level, the better the SNR. The lower the input mixer level, the better the distortion performance. Applying an external low-noise amplifier (LNA) at the front end can also reduce the system noise figure, which improves the SNR of low-level signals.
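The effect of an external LNA on system noise figure can be estimated with the Friis cascade formula, in which each later stage's noise contribution is divided by the gain ahead of it. The sketch below is a minimal illustration; the analyzer noise figure and the LNA gain and noise figure are hypothetical values.

```python
import math


def db_to_linear(value_db: float) -> float:
    """Convert a power ratio in dB to a linear ratio."""
    return 10 ** (value_db / 10)


def cascaded_noise_figure_db(stages) -> float:
    """Friis formula for a chain of (gain_db, noise_figure_db) stages in signal-flow order."""
    total_factor = 0.0
    gain_ahead = 1.0
    for index, (gain_db, noise_figure_db) in enumerate(stages):
        factor = db_to_linear(noise_figure_db)
        if index == 0:
            total_factor = factor
        else:
            total_factor += (factor - 1) / gain_ahead
        gain_ahead *= db_to_linear(gain_db)
    return 10 * math.log10(total_factor)


# Hypothetical values: analyzer with a 25 dB noise figure, LNA with 20 dB gain and 3 dB noise figure.
analyzer = (0.0, 25.0)
lna = (20.0, 3.0)
print(f"Analyzer alone: {cascaded_noise_figure_db([analyzer]):.1f} dB")
print(f"LNA + analyzer: {cascaded_noise_figure_db([lna, analyzer]):.1f} dB")
```

With these example numbers the system noise figure drops from 25 dB to roughly 7 dB. The LNA also raises the level at the mixer by its gain, so the distortion side of the trade-off still has to be checked.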

Toward a wider bandwidth

The migration to higher frequencies and wider bandwidths will continue. Beyond 5G, the next-generation cellular communications technology is likely to utilize terahertz frequencies, a significant increase in bandwidth, and even more complex modulation. Overcoming these challenges moving forward will require well-thought-out test strategies and methodologies.