The Internet of Things (IoT) continues its remarkable growth, and although early estimates were overly optimistic, there are still several credible projections in the range of 25 to 30 billion devices by 2020. Despite improvements in energy harvesting technology, most IoT devices still run on batteries. As applications become more demanding and customer expectations increase, IoT device manufacturers are challenged to make the right battery design decisions to ensure commercial success.

For example, consider wearable IoT devices, such as smart watches, fitness trackers, virtual reality displays, digital corrective eyewear, and even “smart” socks and shirts for athletes. In these applications, long battery life is a competitive differentiator, as users prefer not to have to charge batteries frequently. In other contexts, such as smart city and agriculture applications, the cost of getting to a remote sensor to change a battery is often much higher than the cost of the battery itself. In medical IoT devices, especially those implanted in the human body, a battery replacement may cost tens of thousands of dollars, and the cost of a failed battery may go well beyond mere monetary considerations.

Factors to Consider

There are many factors to consider when selecting a battery for an IoT device. The most obvious are the electrical requirements of the device that the battery will power. These, along with the physical characteristics of the battery, must be considered in the environmental and electromagnetic context in which the device will operate. Finally, there are various costs and business considerations that will likely influence battery selection.

The primary consideration, of course, is that the battery must meet the device’s electrical requirements, such as its nominal voltage and the total battery capacity, which is usually specified in amp-hours (Ah) or watt-hours (Wh). These values must be considered in light of the application’s physical environment, because different battery technologies have different capacity de-rating curves. For example, one particular 2200 mAh lithium-ion battery loses 6 percent of its rated capacity at 0 degrees C, 17 percent at -10 degrees C, and nearly 60 percent at -20 degrees C. In contrast, the rated discharge time under a constant load for a particular manganese lithium coin cell is only 5 percent less than its room-temperature (23 degrees C) rating. Battery technologies also de-rate their capacities differently depending on humidity.
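The de-rating figures above translate directly into usable capacity. As a minimal sketch, assuming de-rating can be treated as a simple fractional loss at each temperature (real curves also depend on load current and are best read from the data sheet), the following Python computes usable capacity and, conversely, the nominal capacity needed to cover a cold-weather requirement. The 1000 mAh requirement and the function names are hypothetical.

```python
# Fractional capacity loss for the 2200 mAh lithium-ion example in the text.
# "Nearly 60 percent" at -20 C is approximated here as 0.60.
NOMINAL_MAH = 2200
CAPACITY_LOSS = {0: 0.06, -10: 0.17, -20: 0.60}  # degrees C -> fraction lost


def usable_capacity_mah(nominal_mah: float, loss_fraction: float) -> float:
    """Capacity actually available after temperature de-rating."""
    return nominal_mah * (1.0 - loss_fraction)


def required_nominal_mah(needed_mah: float, loss_fraction: float) -> float:
    """Nominal capacity needed so that needed_mah remains after de-rating."""
    return needed_mah / (1.0 - loss_fraction)


if __name__ == "__main__":
    for temp_c, loss in sorted(CAPACITY_LOSS.items(), reverse=True):
        print(f"{temp_c:>4} C: {usable_capacity_mah(NOMINAL_MAH, loss):.0f} mAh usable")
    # Hypothetical requirement: 1000 mAh must still be available at -20 C.
    print(f"Nominal needed: {required_nominal_mah(1000, CAPACITY_LOSS[-20]):.0f} mAh")
```

For this example battery, roughly 2068, 1826, and 880 mAh remain usable at 0, -10, and -20 degrees C, so a device that needs 1000 mAh at -20 degrees C would call for about 2500 mAh of nominal capacity in the same chemistry.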

The second characteristic to consider is whether, and how well, the battery can be recharged. How many charge cycles can it withstand? How long does recharging take? How does its capacity de-rate over time? How well does it retain charge in storage? How does it respond to multiple incremental trickle charges as opposed to complete recharges?

You should also consider the battery’s cut-off voltage, because your IoT device may need to implement cut-off circuitry to predict and deal with this end-of-life condition. For example, you may include a feature in your device that sends a “battery nearing end-of-life” indication when the battery’s voltage falls to a certain level. In critical applications, it may be important to use the last few microcoulombs of remaining charge to transmit important information.
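A minimal sketch of the end-of-life logic described above, assuming the firmware can read the battery voltage periodically. The threshold voltages, the action names, and the `BatteryMonitor` class are all hypothetical. The hysteresis band keeps a reading that briefly recovers (for example, once a heavy load is removed) from re-triggering the warning on every sample; because terminal voltage sags under load, readings are most comparable when taken at a consistent load.

```python
# Hypothetical thresholds; real values come from the battery data sheet
# and the device's minimum operating voltage.
CUTOFF_V = 2.2   # at or below this, stop normal operation
WARN_V = 2.6     # send "battery nearing end-of-life" at or below this
REARM_V = 2.7    # hysteresis: re-arm the warning only at or above this


class BatteryMonitor:
    """Turns periodic voltage readings into end-of-life actions."""

    def __init__(self) -> None:
        self.warned = False

    def update(self, volts: float) -> str:
        if volts <= CUTOFF_V:
            # Spend the remaining charge on transmitting critical data, then stop.
            return "final_transmit_and_shutdown"
        if not self.warned and volts <= WARN_V:
            self.warned = True
            return "send_eol_warning"
        if self.warned and volts >= REARM_V:
            # Voltage recovered (e.g. after a load transient); allow a later warning.
            self.warned = False
        return "ok"


if __name__ == "__main__":
    monitor = BatteryMonitor()
    for reading in [3.0, 2.6, 2.55, 2.65, 2.75, 2.58, 2.1]:
        print(reading, monitor.update(reading))
```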

The battery’s physical characteristics may also influence your decision. With wearable and implantable technology, for example, physical dimensions and weight matter greatly, but in many environmental monitoring and smart city applications they are largely irrelevant. Other physical characteristics to consider are the battery’s resistance to moisture penetration, corrosion, and puncture; its ability to withstand vibration and shock; its failure modes; and its end-of-life failure mechanisms. Again, these must be considered in the application context. For example, a well-publicized failure mechanism in some lithium batteries is overheating and fire. This may preclude their use in explosive environments or implantable medical devices, but the same failure in an IoT weather sensor on a metal buoy may not pose a significant hazard.

Finally, there are cost and business issues to consider. Beyond the unit price, there are shipping and storage costs, and possibly costs associated with initially charging the battery. You should also consider the availability of multiple vendors, the maturity of the technology, the likelihood of further investment in the technology, and the end-of-life environmental impact of the particular technology. These factors should also be considered in the application context, especially for sensors deployed near water.
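For remote deployments, the unit price is often the smallest term in the total. A minimal sketch, assuming a simple model in which every replacement after the first installation costs one field visit (echoing the remote-sensor example earlier), compares two battery options over a deployment. The cost categories mirror those listed above; the `BatteryOption` class, the `lifetime_cost` function, and every number are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class BatteryOption:
    unit_cost: float            # purchase price per battery
    logistics_cost: float       # shipping and storage per battery
    initial_charge_cost: float  # zero for primary (non-rechargeable) cells
    service_life_years: float   # expected life in this application


def lifetime_cost(option: BatteryOption, deployment_years: float, visit_cost: float) -> float:
    """Battery costs plus one field visit for every replacement after the first install."""
    batteries = math.ceil(deployment_years / option.service_life_years)
    per_battery = option.unit_cost + option.logistics_cost + option.initial_charge_cost
    return batteries * per_battery + (batteries - 1) * visit_cost


if __name__ == "__main__":
    # Hypothetical: a cheap short-lived cell vs. a pricier long-lived one,
    # for a sensor deployed for 10 years with a $150 site visit per swap.
    cheap = BatteryOption(2.0, 0.5, 0.0, 2.0)
    premium = BatteryOption(12.0, 1.0, 0.0, 10.0)
    for name, opt in [("cheap", cheap), ("premium", premium)]:
        print(name, lifetime_cost(opt, deployment_years=10, visit_cost=150))
```

Under these made-up figures, the cheaper cell costs 612.5 over the deployment, almost all of it in site visits, while the longer-lived cell costs 13.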

Testing Your Battery-Powered Device

Data sheets and marketing materials are helpful, but you will ultimately need to thoroughly test and characterize your device in R&D, validation engineering, and manufacturing. Five instruments frequently used for this task are a digital multimeter (DMM), an oscilloscope with a current probe, a DC power analyzer, a precision source/measure unit (SMU), and a device current waveform analyzer.

The DMM and oscilloscope are relatively inexpensive instruments frequently found on R&D benches. Their familiar use model can provide quick answers of reasonable accuracy to many questions. Unfortunately, the DMM may not have the bandwidth to catch some fast waveform features, and the oscilloscope with a current probe lacks accuracy and resolution for high-precision measurements.
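To see why measurement speed matters for bursty IoT loads, the following sketch compares a densely sampled average with sparse point readings of the same hypothetical waveform. It is an idealization, not a model of any particular meter: many DMMs integrate over an aperture rather than taking instantaneous samples. The point is simply that readings too slow or too sparse to resolve a 2 ms burst can report only the sleep current and miss most of the charge. The waveform parameters and function names are assumptions.

```python
# Hypothetical load: 10 uA sleep current with a 2 ms, 20 mA burst once per second.
SLEEP_A = 10e-6
ACTIVE_A = 20e-3
PERIOD_US = 1_000_000
BURST_US = 2_000


def current_at(t_us: int) -> float:
    """Instantaneous device current at time t_us (integer microseconds)."""
    return ACTIVE_A if (t_us % PERIOD_US) < BURST_US else SLEEP_A


def sampled_mean(step_us: int, duration_us: int, offset_us: int = 0) -> float:
    """Mean of instantaneous point samples taken every step_us microseconds."""
    samples = [current_at(t) for t in range(offset_us, duration_us, step_us)]
    return sum(samples) / len(samples)


if __name__ == "__main__":
    duty = BURST_US / PERIOD_US
    true_mean = duty * ACTIVE_A + (1 - duty) * SLEEP_A
    dense = sampled_mean(step_us=10, duration_us=2 * PERIOD_US)
    sparse = sampled_mean(step_us=100_000, duration_us=2 * PERIOD_US, offset_us=50_000)
    print(f"true mean:       {true_mean * 1e6:.2f} uA")
    print(f"10 us sampling:  {dense * 1e6:.2f} uA")
    print(f"100 ms sampling: {sparse * 1e6:.2f} uA (every burst missed)")
```

In this model the bursts carry about 80 percent of the charge, yet the sparse readings never land on one and report the 10 µA sleep current.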

The DC power analyzer is a modular instrument and, when configured with an SMU module, can be ideal for battery drain analysis. This is especially true when the SMU has battery simulation features and supports seamless ranging, which lets it measure across a large dynamic range without gaps or glitches.
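Battery simulation lets the source behave like a real cell instead of an ideal supply, so the device sees realistic voltage sag during current bursts. One common simplification is an internal-resistance model: terminal voltage equals an open-circuit voltage (OCV) that depends on state of charge, minus load current times internal resistance. The sketch below implements that model under stated assumptions; the OCV table, the 0.15 ohm resistance, and the class and function names are hypothetical and are not taken from any instrument or data sheet.

```python
from bisect import bisect_right

# Hypothetical open-circuit voltage (OCV) vs. state of charge (SoC) points.
OCV_TABLE = [(0.0, 3.0), (0.1, 3.5), (0.5, 3.7), (0.9, 4.0), (1.0, 4.2)]
SOC_POINTS = [soc for soc, _ in OCV_TABLE]


def ocv(soc: float) -> float:
    """Linearly interpolate open-circuit voltage for a state of charge in [0, 1]."""
    soc = min(max(soc, 0.0), 1.0)
    i = bisect_right(SOC_POINTS, soc)
    if i == len(OCV_TABLE):  # soc == 1.0 exactly
        return OCV_TABLE[-1][1]
    (s0, v0), (s1, v1) = OCV_TABLE[i - 1], OCV_TABLE[i]
    return v0 + (v1 - v0) * (soc - s0) / (s1 - s0)


class SimpleBattery:
    """Internal-resistance battery model: V = OCV(SoC) - I * R."""

    def __init__(self, capacity_ah: float, r_internal_ohm: float, soc: float = 1.0):
        self.capacity_ah = capacity_ah
        self.r_internal_ohm = r_internal_ohm
        self.soc = soc

    def terminal_voltage(self, current_a: float) -> float:
        return ocv(self.soc) - current_a * self.r_internal_ohm

    def draw(self, current_a: float, seconds: float) -> float:
        """Apply a constant load; return the loaded voltage at the start of the step."""
        volts = self.terminal_voltage(current_a)
        used = current_a * seconds / 3600.0 / self.capacity_ah
        self.soc = max(self.soc - used, 0.0)
        return volts


if __name__ == "__main__":
    cell = SimpleBattery(capacity_ah=2.2, r_internal_ohm=0.15, soc=0.5)
    print(f"open circuit: {cell.terminal_voltage(0.0):.3f} V")
    print(f"500 mA burst: {cell.terminal_voltage(0.5):.3f} V")
```

In this model a 500 mA radio burst on a half-charged cell pulls the terminal voltage down by 75 mV, from 3.7 V to 3.625 V, a droop that an ideal bench supply would hide.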

The precision SMU is also useful, especially for accurately measuring the low currents drawn in the sleep modes where many IoT devices spend most of their time. However, these instruments often have limited display capabilities, and they may lack the bandwidth needed to properly measure fast waveform features.
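Why sleep current deserves a precision measurement becomes clear from a simple charge budget. The sketch below, assuming an idealized two-state duty cycle and ignoring de-rating, self-discharge, and cut-off voltage, computes the time-weighted average current and the resulting battery life. The 220 mAh capacity, 10 mA active current, 0.1 percent duty cycle, sleep currents, and function names are hypothetical.

```python
def average_current_a(sleep_a: float, active_a: float, active_fraction: float) -> float:
    """Time-weighted mean current for a two-state (sleep/active) duty cycle."""
    return active_fraction * active_a + (1.0 - active_fraction) * sleep_a


def battery_life_days(capacity_ah: float, avg_current_a: float) -> float:
    """Idealized life: ignores de-rating, self-discharge, and cut-off voltage."""
    return capacity_ah / avg_current_a / 24.0


if __name__ == "__main__":
    capacity_ah = 0.220  # hypothetical coin-cell capacity
    for sleep_a in (5e-6, 10e-6):
        avg = average_current_a(sleep_a, active_a=10e-3, active_fraction=0.001)
        print(f"sleep {sleep_a * 1e6:.0f} uA -> average {avg * 1e6:.3f} uA, "
              f"life {battery_life_days(capacity_ah, avg):.0f} days")
```

With these numbers, sleep accounts for about a third of the average current, and mis-measuring a 5 µA sleep current as 10 µA shortens the predicted life from roughly 611 days to roughly 459 days.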

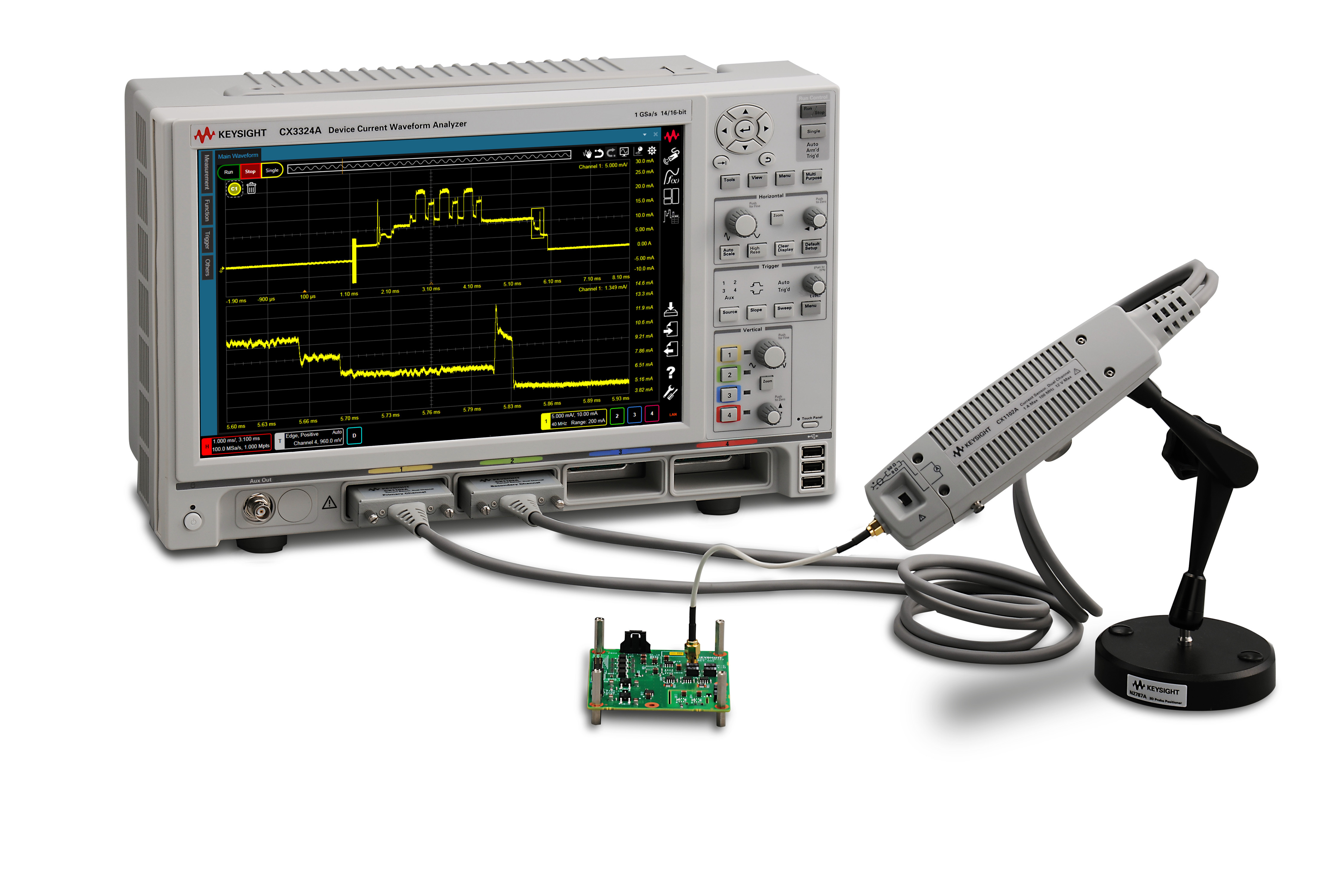

Finally, a new instrument type known as the device current waveform analyzer combines several of the strengths described above in one instrument.

The device current waveform analyzer has the use model and visualization capabilities of an oscilloscope, but with much better accuracy and resolution. It also includes sophisticated software features, such as complementary cumulative distribution function (CCDF) analysis and automatic current and power profiling. These features provide quick answers that complement the instrument’s accuracy and visualization tools.

In summary, there are many aspects of batteries you should consider to make the optimal choice for your battery-powered IoT device. These include electrical characteristics, physical characteristics, and business considerations. You can get much of this information from data sheets and vendor websites, but you must always confirm battery performance with measurement instruments and associated software.

Sidebar—Battery Drain Analysis (BDA) Software Tools

The complementary cumulative distribution function (CCDF) shows how the device’s current draw is distributed across current levels. The horizontal axis is current, usually in mA, and the vertical axis is a decimal number or percentage indicating the fraction of time (that is, of the measured samples) during which the current is greater than or equal to the value shown on the horizontal axis. In Figure 2, for example, the current exceeds 4 mA only 18 percent of the time, and exceeds 8 mA only about 5 percent of the time.

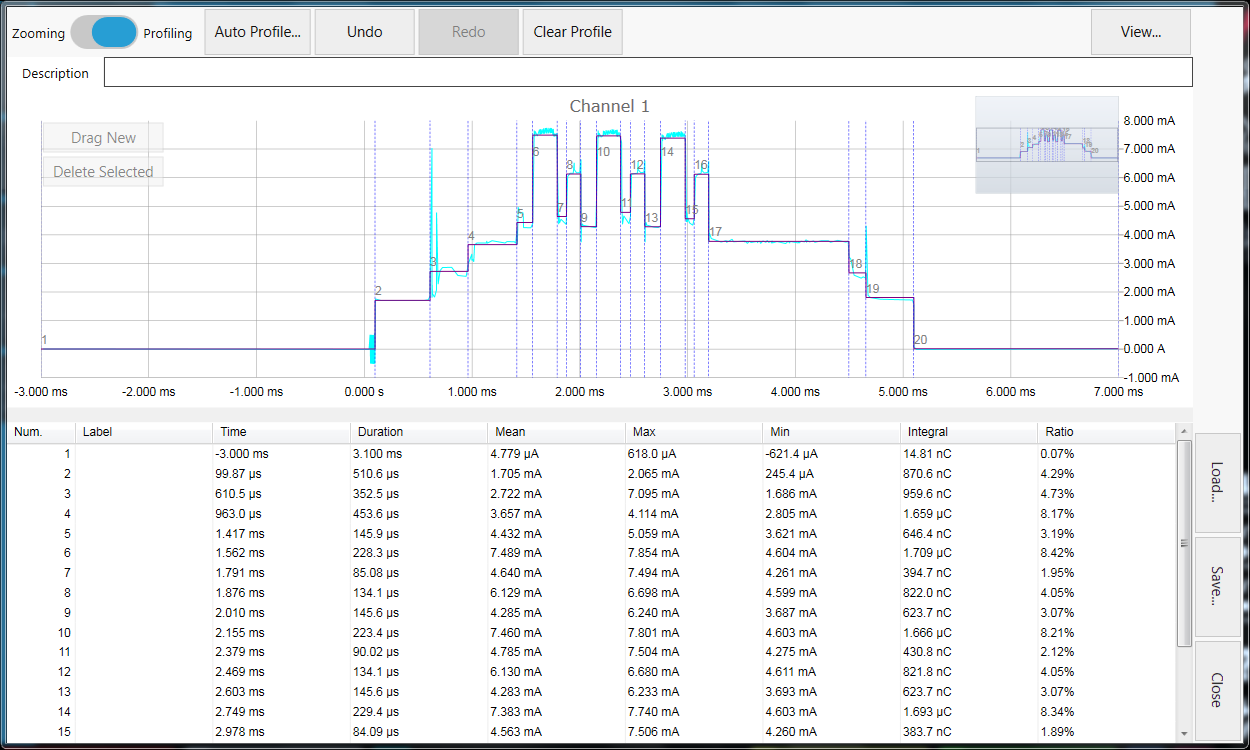
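A minimal sketch of how a sample-based CCDF can be computed from a captured current waveform, assuming uniformly spaced samples so that the fraction of samples equals the fraction of time. The sample values and the `ccdf` function name are hypothetical, and commercial BDA tools may compute or display the curve differently.

```python
from bisect import bisect_left


def ccdf(samples, thresholds):
    """Fraction of samples greater than or equal to each threshold."""
    ordered = sorted(samples)
    n = len(ordered)
    # bisect_left counts samples strictly below x; the remaining n - count are >= x.
    return [(n - bisect_left(ordered, x)) / n for x in thresholds]


if __name__ == "__main__":
    # Hypothetical current samples in mA, taken at a fixed sample rate.
    samples_ma = [0.5, 0.5, 1.2, 2.0, 2.0, 3.5, 4.1, 6.0, 8.2, 12.0]
    for x, p in zip([1, 4, 8], ccdf(samples_ma, [1, 4, 8])):
        print(f">= {x} mA: {p:.0%} of samples")
```

Sorting once and then using binary search costs O(n log n) for the sort plus O(log n) per threshold, which keeps the approach practical for captures with millions of samples.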

An automated current and power profiler slices a current waveform into time-based segments according to parameters set by the user. It then analyzes the waveform and, for each segment, reports the start time, duration, mean, maximum, and minimum current, the charge consumed, and that charge as a percentage of the total. Optionally, the user can also name segments and manually move segment markers for re-analysis.
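A minimal sketch of the profiling step described above, assuming uniformly sampled current data. The segmentation rule used here, starting a new segment whenever the current crosses a user-set threshold, is a hypothetical stand-in for whatever parameters a commercial profiler exposes; the `Segment` class and function names are also illustrative. Charge is integrated with a simple rectangle rule, so its accuracy depends on the sample interval.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    start_s: float
    duration_s: float
    mean_a: float
    max_a: float
    min_a: float
    charge_c: float
    charge_pct: float


def threshold_boundaries(samples_a, threshold_a):
    """Start a new segment wherever the current crosses threshold_a."""
    boundaries = [0]
    for k in range(1, len(samples_a)):
        if (samples_a[k] >= threshold_a) != (samples_a[k - 1] >= threshold_a):
            boundaries.append(k)
    return boundaries


def profile(samples_a, dt_s, boundaries):
    """Per-segment statistics for a non-empty, uniformly sampled current waveform.

    boundaries: sorted sample indices where segments begin; the first must be 0.
    """
    edges = list(boundaries) + [len(samples_a)]
    total_c = sum(samples_a) * dt_s
    segments = []
    for start, stop in zip(edges, edges[1:]):
        seg = samples_a[start:stop]
        charge_c = sum(seg) * dt_s  # rectangle-rule integral of current over time
        segments.append(Segment(
            start_s=start * dt_s,
            duration_s=len(seg) * dt_s,
            mean_a=sum(seg) / len(seg),
            max_a=max(seg),
            min_a=min(seg),
            charge_c=charge_c,
            charge_pct=100.0 * charge_c / total_c if total_c else 0.0,
        ))
    return segments


if __name__ == "__main__":
    # Hypothetical 1 kHz capture: 1 mA idle, a 10 mA burst, then idle again.
    samples = [0.001] * 4 + [0.010] * 2 + [0.001] * 4
    for s in profile(samples, 0.001, threshold_boundaries(samples, 0.005)):
        print(f"t={s.start_s:.3f} s  mean={s.mean_a * 1e3:.1f} mA  {s.charge_pct:.1f}% of charge")
```

Naming segments or moving a marker corresponds, in this sketch, to editing the `boundaries` list and calling `profile` again.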