One of the most desirable additions to any technology is the killer app: a function or application that makes the technology indispensable or clearly superior to competing products and jump-starts sales.

Perhaps the earliest example is VisiCalc, an electronic spreadsheet that debuted in 1979. It initially ran only on the Apple II and is credited with making that computer a commercial success. Desktop publishing, kicked off by Aldus PageMaker, had a similar effect on Macintosh sales. However, spreadsheets also turned the personal computer into a business tool, which spelled doom for VisiCalc once Lotus 1-2-3 arrived on the more powerful IBM PC. As more advanced operating systems added layers of abstraction over the underlying hardware, later killer apps spurred sales across an entire segment rather than for an individual machine. Examples include Mosaic (the first widely popular graphical web browser), email, and, of course, the Google search engine.

FPGAs Seek Their Place in the Sun

This brings us to the FPGA. A high-end FPGA combines a dizzying array of features. Intel’s Stratix® 10 SX, for example, includes an FPGA fabric with 5.5 million logic elements; a quad-core 64-bit ARM® Cortex®-A53 running at up to 1.5 GHz; controllers for NAND flash, Ethernet, USB, and I2C; up to 144 Gbps transceivers; and a suite of security features. Xilinx, Intel’s main competitor, offers a similarly broad feature set in its UltraScale+ FPGAs.

High-end FPGAs target a wide variety of applications, including 5G communications, radar processing, data center acceleration, and high-performance computing (HPC). Unfortunately, the FPGA’s flexibility is also its Achilles’ heel. It is great for prototyping and limited production runs, but it often loses out to more specialized technologies such as ASICs when it’s time to design a high-volume product. As a result, FPGA manufacturers have long been searching for a killer app that takes advantage of the FPGA’s unique combination of strengths.

Over the years, a succession of candidates has come and gone. Now FPGA manufacturers are hoping that artificial intelligence (AI) acceleration might fill that role. Massively parallel graphics processing units (GPUs) dominate the training stage of neural networks, so FPGA manufacturers are concentrating on inference, the stage in which an already-trained neural network draws conclusions about new data.
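To make the training/inference split concrete, here is a minimal Python sketch of inference as a pure forward pass. The tiny two-layer network and its weights (`W1`, `B1`, `W2`, `B2`) are hypothetical placeholders standing in for parameters produced by an earlier training stage. They happen to make the network compute |x0 + x1|. The names `dense`, `relu`, and `infer` are illustrative only and do not belong to any framework's API.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def dense(x: Vector, weights: Matrix, bias: Vector) -> Vector:
    """Fully connected layer: y[j] = sum_i x[i] * weights[i][j] + bias[j]."""
    return [
        sum(x[i] * weights[i][j] for i in range(len(x))) + bias[j]
        for j in range(len(bias))
    ]


def relu(x: Vector) -> Vector:
    """Rectified linear activation: clamp negative values to zero."""
    return [max(0.0, v) for v in x]


# Hypothetical "already-trained" parameters for a 2-input, 2-hidden, 1-output
# network. In practice these would come from the training stage (e.g., on GPUs).
W1: Matrix = [[1.0, -1.0],
              [1.0, -1.0]]
B1: Vector = [0.0, 0.0]
W2: Matrix = [[1.0],
              [1.0]]
B2: Vector = [0.0]


def infer(x: Vector) -> float:
    """Inference: forward pass only. No loss, no gradients, no weight updates."""
    hidden = relu(dense(x, W1, B1))
    return dense(hidden, W2, B2)[0]


# Apply the frozen network to new data it has never seen.
new_data = [[2.0, 3.0], [-2.0, -3.0], [1.0, -1.0]]
predictions = [infer(x) for x in new_data]  # [5.0, 5.0, 0.0]
```

Each output of `dense` is an independent sum of multiply-accumulate operations, which is why inference maps naturally onto parallel hardware such as GPU cores or FPGA DSP blocks; real networks simply perform the same computation on far larger matrices.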

Each type of architecture has advantages and disadvantages, and GPU, CPU, and FPGA architectures are converging as vendors search for the optimum feature set. FPGAs come with hard- or soft-IP CPUs, GPUs, and DSP blocks; CPUs include hardware accelerators for cryptographic functions; NVIDIA’s Tesla T4 GPU includes Tensor Cores tuned for AI inference; and Intel’s newly announced Nervana Neural Network Processor for Inference (NNP-I) will include 10th-generation Ice Lake CPU cores for both general-purpose and neural operations.

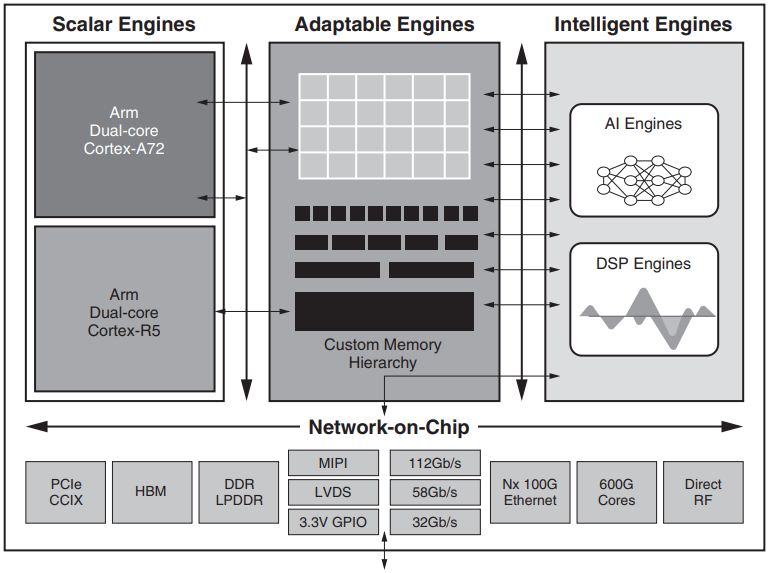

Mindful of this convergence, some FPGA manufacturers are giving themselves a makeover. Speaking at the Xilinx Developer Forum (XDF) in October, Xilinx CEO Victor Peng announced, “Xilinx is not an FPGA company; Xilinx is a platform company,” as he revealed details of the company’s new Versal AI Core. Versal AI Core belongs to Xilinx’s Versal family of Adaptive Compute Acceleration Platform (ACAP) devices, which combine a new FPGA fabric (sorry, “Adaptable Engine”) with distributed memory, hardware-programmable DSP blocks, a multi-core SoC, and several programmable, hardware-adaptable compute engines connected by an on-chip network.

FPGA Software Gets an Upgrade

Software has long been a weakness of FPGAs. Developers have traditionally used a hardware description language (HDL) such as VHDL or Verilog to design the FPGA configuration, which requires both coding skills and an in-depth knowledge of the underlying hardware. Contrast this with programming a typical CPU, where the operating system masks the idiosyncrasies of the microprocessor and its peripherals. GPU programmers also have access to popular languages such as C, C++, Python, and Java, thanks to frameworks such as OpenCL and NVIDIA’s CUDA application programming interface (API).

Aiming to address this weakness, Xilinx offers multiple programming options for Versal. Data scientists, for example, can work with the TensorFlow, Caffe, and MXNet frameworks; the Apache Spark analytics engine; and the FFmpeg multimedia software library.

Cloud service providers (CSPs) are offering AI inference as part of their Machine Learning as a Service (MLaaS) products, and inference is also expected to be a key part of edge applications such as autonomous vehicles.

Will AI inference be the long-awaited killer app for FPGA manufacturers? Stay tuned.