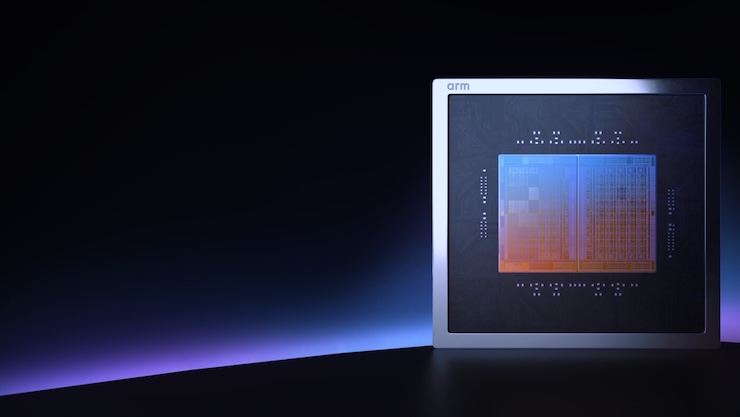

Arm has been licensing IP for over 30 years. Now it’s making chips. The Arm AGI CPU is the company’s first production silicon: a data center CPU built around agentic AI workloads.

The specs: up to 136 Neoverse V3 cores, 300 W TDP, 6 GB/s memory bandwidth per core at sub-100 ns latency. Air-cooled deployments scale to 8,160 cores per rack; liquid-cooled systems top 45,000. Arm claims more than 2x the performance per rack compared to x86, and up to $10B in CAPEX savings per GW of AI data center capacity.
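
To put the rack numbers in context, here is a rough back-of-the-envelope sketch in Python. It uses only the figures quoted above and makes some loud assumptions that Arm has not confirmed: every socket is the full 136-core part, each CPU draws exactly its 300 W TDP, memory bandwidth scales linearly with core count, and nothing else in the rack (memory, NICs, accelerators, fans) is counted. The `rack_summary` helper is a hypothetical name used for illustration only.

```python
# Back-of-the-envelope figures derived from the quoted specs.
# Assumptions (not from Arm): fully populated 136-core parts, CPUs running
# at TDP, linear bandwidth scaling, and CPU power only.
CORES_PER_CPU = 136               # "up to 136 Neoverse V3 cores"
TDP_WATTS = 300                   # per-CPU TDP
BW_PER_CORE_GB_S = 6              # memory bandwidth per core, GB/s
AIR_COOLED_CORES_PER_RACK = 8_160


def rack_summary(cores_per_rack: int, cores_per_cpu: int = CORES_PER_CPU) -> dict:
    """Estimate CPU count, CPU power, and aggregate bandwidth for one rack."""
    cpus, leftover_cores = divmod(cores_per_rack, cores_per_cpu)
    return {
        "cpus": cpus,
        "leftover_cores": leftover_cores,  # non-zero if the split is uneven
        "cpu_power_kw": cpus * TDP_WATTS / 1000,
        "aggregate_bw_gb_s": cores_per_rack * BW_PER_CORE_GB_S,
    }


if __name__ == "__main__":
    air = rack_summary(AIR_COOLED_CORES_PER_RACK)
    per_socket_bw = CORES_PER_CPU * BW_PER_CORE_GB_S
    print(f"CPUs per air-cooled rack: {air['cpus']}")
    print(f"CPU power per rack:       {air['cpu_power_kw']} kW")
    print(f"Bandwidth per socket:     {per_socket_bw} GB/s")
    print(f"Bandwidth per rack:       {air['aggregate_bw_gb_s']} GB/s")
```

Under those assumptions, the air-cooled figure works out to 60 CPUs per rack, about 18 kW of CPU power, and 816 GB/s of memory bandwidth per socket. The liquid-cooled figure is only given as "top 45,000", so it is left out rather than guessed at.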

The architecture assigns a dedicated core per program thread, which means deterministic performance under sustained load: no throttling, no idle threads burning power. That matters when you’re running continuously active AI agents rather than bursty inference jobs. The design also drops the legacy overhead baked into x86, resulting in better utilization of the compute you’re actually paying for. Combined with support for high-density 1U server chassis, the AGI CPU is aimed squarely at operators trying to pack more usable compute into existing power envelopes.

Meta co-developed the part and will deploy it alongside its MTIA accelerators. Other confirmed customers include OpenAI, Cerebras, Cloudflare, and SK Telecom. System builders Supermicro, Lenovo, Quanta, and ASRock Rack have early hardware in the works, with broader availability expected in the second half of 2026. TSMC is manufacturing on 3 nm.

The bigger story here is strategic. Arm is no longer just the foundation others build on. It’s now a direct competitor in the silicon space, at least for this class of workload. Partners have been warned.