Traditional frequency modulation has long been used in broadcast applications, but modern digital communication schemes use various digital versions of FM. Communication parameters for digital communication techniques differ somewhat from those for their analog counterparts. It can be useful to review some of these changes. Perhaps the most basic is communication receiver sensitivity.

For purposes of illustration, consider a basic digital FM technique: frequency shift keying (FSK), a relatively simple form of digital modulation. Binary FSK is a form of FSK where the input signal can have only two different values. It resembles conventional FM except that the modulating signal switches between two discrete voltage levels (representing 1 and 0) rather than taking on a continuously changing value such as a sine wave.

Binary FSK is the most common form of FSK. Here, the frequency of the carrier shifts according to the binary input signal. Consequently, the instantaneous frequency at the output of an FSK modulator is a step function of time. As the binary input signal changes from a logic 0 to a logic 1 and vice versa, the FSK output signal shifts between two frequencies.
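The frequency shifting described above can be sketched in a few lines. This is a minimal illustration, not a production modulator: the function name `bfsk_samples`, the two tone frequencies, the bit rate, and the sample rate are all assumptions chosen for the example. The phase is accumulated across bit boundaries so the waveform stays continuous when the frequency steps.

```python
import math

def bfsk_samples(bits, f0, f1, bit_rate, sample_rate):
    """Return continuous-phase binary FSK samples for a sequence of 0/1 bits.

    f0 is sent for a logic 0 and f1 for a logic 1 (assumed mapping).
    """
    samples_per_bit = int(sample_rate / bit_rate)
    phase = 0.0
    out = []
    for bit in bits:
        freq = f1 if bit else f0                      # frequency steps with the data
        step = 2.0 * math.pi * freq / sample_rate     # phase advance per sample
        for _ in range(samples_per_bit):
            out.append(math.sin(phase))
            phase += step                             # carry phase into the next bit
    return out

# Illustrative values only: 100 bit/s data, 1 kHz and 2 kHz tones, 48 kHz sampling.
wave = bfsk_samples([0, 1, 1, 0], f0=1000.0, f1=2000.0,
                    bit_rate=100.0, sample_rate=48000.0)
print(len(wave))
```

Each bit occupies `sample_rate / bit_rate` samples, so the only thing that changes from bit to bit is the per-sample phase step.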

For a conventional analog receiver, sensitivity is the weakest signal that the receiver can pick up and that a human listener can still generally discern. Analog receiver sensitivity is generally measured by monitoring the SINAD (Signal to Noise and Distortion) level as the RF signal power drops. The RF input level resulting in 12 dB SINAD is typically considered the specified sensitivity of the receiver. In contrast, the sensitivity of a digital receiver involves a bit error rate (BER). Input signal power is expressed in dBm while bit errors are expressed as a rate, usually 10⁻³, or one error per 1,000 bits.

Unfortunately, bit errors can be defined in several different ways. Perhaps the most obvious way is to count the total actual number of demodulated pulses over a given interval of time and compare them to the total theoretical number of demodulated pulses over the same interval. This approach isn’t considered acceptable because it does not detect changes in pulse width. As the received RF signal grows weaker, changes in pulse widths become greater and more inconsistent.

The concept of jitter is a way of dealing with such issues. Jitter is timing error, or deviation from the true periodicity that an ideal clock signal would exhibit. For the case of demodulated data from an RF receiver, jitter is the unwanted variation of phase or pulse width in the demodulated signal. Alternatively, it can be measured and displayed as spectral density variations.

Absolute jitter is the displacement of the cycle’s rising or falling edge with respect to its ideal position in time. Period (cycle) jitter is the difference in duration between a given clock period and the average (ideal) clock period. Cycle-to-cycle jitter is the difference in duration of adjacent timing intervals. Unit interval (UI) in telecommunications quantifies jitter with respect to some fraction of the transmission period. Picoseconds, degrees and radians are used to measure these phenomena.
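To make these definitions concrete, here is a minimal sketch that computes each quantity from a list of edge timestamps. The function names, the choice of the first edge as the time reference, and the sample timestamps are assumptions made for illustration; a real measurement would take the ideal edge positions from a recovered or reference clock.

```python
def jitter_metrics(edges, nominal_period):
    """Compute jitter figures from rising-edge timestamps (seconds).

    Assumes the first edge defines the ideal time grid.
    """
    # Absolute jitter: each edge versus its ideal position in time.
    absolute = [t - (edges[0] + n * nominal_period) for n, t in enumerate(edges)]
    # Measured duration of each period.
    periods = [b - a for a, b in zip(edges, edges[1:])]
    # Period jitter: each period versus the ideal period.
    period = [p - nominal_period for p in periods]
    # Cycle-to-cycle jitter: difference between adjacent periods.
    cycle_to_cycle = [b - a for a, b in zip(periods, periods[1:])]
    return absolute, period, cycle_to_cycle

def to_unit_intervals(seconds, nominal_period):
    """Express a time error as a fraction of the transmission period (UI)."""
    return seconds / nominal_period

# Made-up edge times for a nominal 1 ms period; the third edge arrives 0.1 ms late.
edges = [0.0, 1.0e-3, 2.1e-3, 3.0e-3]
absolute, period, cycle_to_cycle = jitter_metrics(edges, 1.0e-3)
print(to_unit_intervals(absolute[2], 1.0e-3))  # roughly 0.1 UI
```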

Jitter characterization is handled in various ways. For example, most public safety digital radios have a test mode providing BER measurement and display for a few BER test patterns. One test pattern called the 1011 Hz pattern is a near Pseudo-Random Binary Sequence (PRBS) that also provides an audio tone output. The tone indicates to anyone listening that the communication channel is out of service, similar to the situation with the 1 kHz tone for SINAD testing of an analog receiver.

In cases where there is no built-in BER test mode, the receiver must output the received data bit stream so a test system can compare the transmitted data pattern with the received pattern. Typically the sensitivity of the receiver is the RF signal level for which the measured BER is 5%.
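The comparison a test system performs can be sketched as follows. This is an illustrative outline only: the function name and the short bit pattern are hypothetical, and the sketch assumes the two streams are already time-aligned, which a real test system has to arrange first.

```python
def bit_error_rate(sent, received):
    """Return the fraction of bits that differ between two aligned bit streams."""
    if not sent or len(sent) != len(received):
        raise ValueError("bit streams must be non-empty and of equal length")
    errors = sum(1 for s, r in zip(sent, received) if s != r)
    return errors / len(sent)

# Hypothetical 20-bit pattern with a single corrupted bit.
sent = [1, 0, 1, 1, 0, 0, 1, 0, 1, 1, 0, 1, 0, 0, 1, 1, 0, 1, 0, 1]
received = list(sent)
received[7] ^= 1                      # flip one bit to simulate an error
ber = bit_error_rate(sent, received)
print(ber)                            # 1 error in 20 bits
```

In practice the pattern would be far longer than 20 bits so that the measured rate is statistically meaningful. The RF level would then be stepped down until the measured BER reaches the 5% threshold.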

Though digital receiver sensitivity is specified and measured with just a single test signal, real-world receivers are impacted by a wide variety of signals. In testing and predicting receiver performance in these real-world situations, the fundamental measure is still typically the 5% BER level.

One problem with receiver testing is that the desired signal level is near the receiver sensitivity level. Interfering signals must be generated and combined with as little distortion as possible, a process that can be tricky. Another problem is that digital modulation is highly “noise like.” If displayed on a spectrum analyzer, the level will change with the resolution bandwidth setting. Consequently, it is possible to find signal analyzers specifically optimized for these sorts of tests. The Anritsu MS2830A Signal Analyzer is one example.

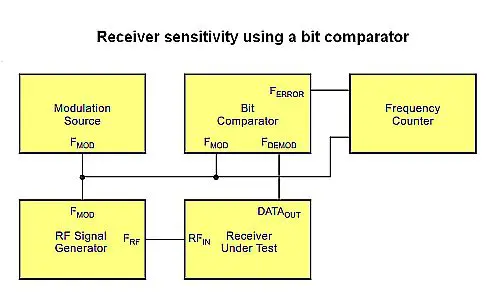

Unfortunately, signal analyzers of this caliber can be on the pricey side. Another approach, described by Microchip, avoids the need for such an instrument. The technique performs sensitivity measurements using an RF signal generator, a modulation source, a bit comparator circuit, and a frequency counter. The bit comparator is a custom design that Microchip seems to have cobbled together on a work bench. It generates a pulse, called FERROR, whenever the demodulated signal, FDEMOD, falls outside the jitter window (set to ±25% by default) with respect to a reference signal, FMOD. The BER is calculated by dividing the frequency of the bit comparator’s error signal, FERROR, by that of the reference signal, FMOD. Additionally, Microchip gave the bit comparator provisions for adjusting the jitter window from ±10% to ±55%. The modulation source was a square wave whose duty cycle was held at 50% and whose frequency was varied.
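The logic of the bit comparator can be modeled in software. The sketch below is a rough behavioral model, not Microchip's circuit: the function names are hypothetical, and it assumes the jitter window is applied to the demodulated pulse width relative to the reference pulse width. The example widths and counter readings are made up.

```python
def count_window_errors(pulse_widths, nominal_width, window=0.25):
    """Count demodulated pulses whose width falls outside the jitter window.

    window is a fraction of the nominal width (0.25 means +/-25%).
    """
    if not 0.10 <= window <= 0.55:
        raise ValueError("jitter window is adjustable from 0.10 to 0.55")
    limit = window * nominal_width
    return sum(1 for w in pulse_widths if abs(w - nominal_width) > limit)

def ber_from_counter(f_error_hz, f_mod_hz):
    """BER as the ratio of error-pulse frequency to reference frequency."""
    return f_error_hz / f_mod_hz

# Made-up pulse widths, normalized so that the reference width is 1.0.
widths = [1.0, 1.2, 0.7, 1.3, 1.0]
errors = count_window_errors(widths, nominal_width=1.0)
print(errors)                            # two pulses deviate by more than 25%
print(ber_from_counter(50.0, 1000.0))    # 50 error pulses/s against a 1 kHz reference
```

Widening the window makes the comparator more tolerant of pulse-width variation, so the same demodulated signal yields a lower measured BER.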

Manchester encoding is a commonly accepted data bit encoding standard for low-cost and low data rate RF systems. In many applications, Manchester yields optimum receiver performance by virtue of the characteristic average dc level of 50% that is present on the demodulated signal.
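The 50% average level follows directly from the way Manchester coding represents each bit as a pair of opposite half-bits. A minimal sketch is shown here. It assumes the convention in which a 1 is sent as low-then-high and a 0 as high-then-low; the opposite convention is also in use, and the function names are chosen only for this example.

```python
def manchester_encode(bits):
    """Encode bits as half-bit pairs: 1 -> (0, 1), 0 -> (1, 0) (assumed convention)."""
    out = []
    for bit in bits:
        out.extend((0, 1) if bit else (1, 0))
    return out

def manchester_decode(halves):
    """Decode half-bit pairs back to bits; reject pairs with no mid-bit transition."""
    if len(halves) % 2:
        raise ValueError("expected an even number of half-bits")
    bits = []
    for first, second in zip(halves[0::2], halves[1::2]):
        if first == second:
            raise ValueError("invalid Manchester pair: no mid-bit transition")
        bits.append(1 if (first, second) == (0, 1) else 0)
    return bits

data = [1, 0, 1, 1, 1, 1]
encoded = manchester_encode(data)
print(sum(encoded) / len(encoded))   # average level is 0.5 whatever the data
```

Because every bit contributes exactly one high and one low half-bit, the average level stays at 50% even for long runs of identical bits.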

It’s possible to use the Microchip technique to demonstrate several qualities of digital receivers. For one, receiver sensitivity is a function of the transmission data rate. Consistent with theory, as the data rate goes down, receiver sensitivity improves, meaning the receiver can recover weaker signals. Theoretically, doubling the data rate reduces sensitivity by 3 dB.
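The 3 dB figure can be checked with a short calculation. The sketch below assumes that the required receiver noise bandwidth scales in direct proportion to the data rate, so the minimum detectable signal level shifts by 10·log10 of the rate ratio. The function name and the example data rates are illustrative.

```python
import math

def sensitivity_change_db(old_rate_bps, new_rate_bps):
    """Return the theoretical shift in minimum detectable level, in dB.

    Positive values mean a stronger signal is needed (sensitivity is worse).
    Assumes noise bandwidth is proportional to data rate.
    """
    return 10.0 * math.log10(new_rate_bps / old_rate_bps)

print(round(sensitivity_change_db(2400.0, 4800.0), 2))   # doubling the rate
print(round(sensitivity_change_db(4800.0, 1200.0), 2))   # quartering the rate
```

Doubling the rate gives about +3 dB, so the receiver needs a signal roughly 3 dB stronger. Cutting the rate to one quarter gives about -6 dB.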

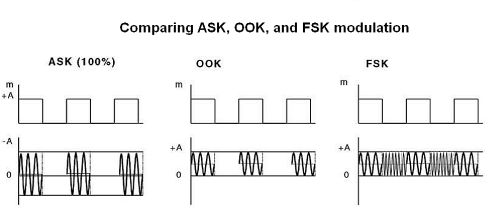

Also evident from sensitivity tests are differences between Amplitude Shift Keying (ASK) and FSK. ASK represents digital data as variations in the amplitude, rather than the frequency, of a carrier wave. On-Off Keying (OOK) is a special form of ASK where no carrier is present during the transmission of a “zero.” By definition, OOK sensitivity is 6 dB lower than ASK sensitivity due to the lower peak value of transmitted power. In practice, however, OOK is usually what is meant when a modulation is described as ASK. ASK modulated signals yield approximately 7 dB better sensitivity than FSK modulated signals at similar data rates. However, OOK (the most commonly used form of ASK) offers little improvement in sensitivity compared to FSK modulated signals.