Computer and machine vision based on video signal processing and analysis is a critical function in systems like autonomous vehicles, medical imaging diagnostic equipment, facial recognition and eye tracking applications, smart cities, supply chain management, and robotics. It requires rapid and accurate object and feature recognition and extraction. Implementation of computer vision is complex and can benefit from using artificial intelligence (AI), machine learning (ML), convolutional neural network (CNN) inferencing engines, and other advanced computing techniques.

Designers of computer vision systems need tools that assist in merging high-performance hardware and software. Sources where designers can get the needed electronic design automation (EDA) tools include component suppliers, general and specialist EDA tool makers, and even focused industry organizations that offer open-source EDA solutions. This first of two FAQs is focused on tools available from component suppliers and EDA tool makers. Part two explores the EDA tool options from industry organizations.

Component suppliers

Video processing is hardware-intensive, and component suppliers like Nvidia and Intel offer EDA tools, including software development kits (SDKs), to help designers use their silicon. Nvidia, for example, offers a collection of tools for developing accelerated video processing on its graphics processing units (GPUs). Some of the available tools include:

- RTX Broadcast Engine SDKs use AI to transform live streams.

- Optical Flow SDK computes the relative motion of pixels between images.

- GPUDirect for Video helps I/O board designers develop drivers for transferring video frames in and out of Nvidia GPU memory.

- Capture SDK handles capture and video compression.

- VRWorks 360 Video SDK enables developers to capture, stitch, and stream 360-degree videos.
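To make "relative motion of pixels between images" concrete, the sketch below estimates the shift of one image block between two synthetic frames using exhaustive block matching with a sum-of-absolute-differences cost. It is a generic illustration in plain Python, not the Nvidia Optical Flow SDK API; the function names, block size, and search range are assumptions chosen for the example.

```python
def sad(frame_a, frame_b, top, left, dy, dx, size):
    """Sum of absolute differences between a block in frame_a and the
    same block shifted by (dy, dx) in frame_b."""
    total = 0
    for r in range(size):
        for c in range(size):
            total += abs(frame_a[top + r][left + c]
                         - frame_b[top + dy + r][left + dx + c])
    return total


def estimate_motion(frame_a, frame_b, top, left, size=4, search=2):
    """Return the (dy, dx) shift within +/-search pixels that best maps
    the block at (top, left) in frame_a onto frame_b."""
    height, width = len(frame_b), len(frame_b[0])
    best_shift, best_cost = (0, 0), None
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            # Skip shifts that would move the block outside frame_b.
            if not (0 <= top + dy and top + dy + size <= height
                    and 0 <= left + dx and left + dx + size <= width):
                continue
            cost = sad(frame_a, frame_b, top, left, dy, dx, size)
            if best_cost is None or cost < best_cost:
                best_shift, best_cost = (dy, dx), cost
    return best_shift


def make_frame(height, width, square_top, square_left, square_size=4):
    """Synthetic frame: black background with one bright square."""
    frame = [[0] * width for _ in range(height)]
    for r in range(square_top, square_top + square_size):
        for c in range(square_left, square_left + square_size):
            frame[r][c] = 255
    return frame


# The square moves 1 pixel down and 2 pixels right between the frames.
frame_a = make_frame(12, 12, 3, 3)
frame_b = make_frame(12, 12, 4, 5)
motion = estimate_motion(frame_a, frame_b, top=3, left=3)
print(motion)  # (1, 2)
```

A dense optical flow field repeats this kind of estimate for every pixel or block in the frame, which is why the workload is usually handed to dedicated hardware.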

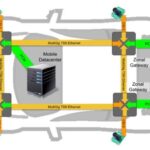

Intel also offers an extensive suite of intellectual property (IP) tools for video, image, and vision processing using its field programmable gate arrays (FPGAs). Intel’s Video and Image Processing Suite of IPs includes cores that range from simple building block functions, such as clocked video and color space conversion, to complex functions that implement programmable polyphase scaling, arbitrary non-linear distortion correction, 3D look-up tables, adaptive tone mapping, and so on (Figure 1).

These IPs use Intel FPGA streaming video data interfaces for video I/O, based on the standard AXI4-Stream protocol. Designers can mix and match the Intel video IPs with their own proprietary IPs for unique applications. In addition, custom image processing signal chains can be developed using Intel’s Platform Designer SDK to automatically integrate embedded processors and peripherals and generate arbitration logic.
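Color space conversion, one of the simple building blocks mentioned above, amounts to a fixed matrix multiply and offset applied to every pixel. The sketch below shows the arithmetic in software for an RGB to YCbCr conversion. The coefficients are an assumption (full-range BT.601, as used in JPEG/JFIF); the source does not state which standards or ranges the Intel core supports, and an FPGA implementation would use fixed-point arithmetic rather than floating point.

```python
def clamp8(value):
    """Round to the nearest integer and clamp to the 8-bit range 0..255."""
    return max(0, min(255, int(round(value))))


def rgb_to_ycbcr(r, g, b):
    """Convert one 8-bit RGB pixel to 8-bit YCbCr.

    Assumes full-range BT.601 coefficients (the JPEG/JFIF convention).
    """
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return clamp8(y), clamp8(cb), clamp8(cr)


def convert_frame(frame):
    """Apply the conversion to every pixel of a frame stored as rows of
    (r, g, b) tuples."""
    return [[rgb_to_ycbcr(*pixel) for pixel in row] for row in frame]


# A 1x3 test frame: black, white, and pure red pixels.
frame = [[(0, 0, 0), (255, 255, 255), (255, 0, 0)]]
converted = convert_frame(frame)
print(converted)
```

Gray pixels map to Cb = Cr = 128, which is a quick sanity check for any implementation of this matrix.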

Toolmakers

Designers can also select from focused video processing EDA tools as well as offerings from broad-line EDA tool makers. For example, MVTec has a range of tools for implementing machine vision in agriculture, robotics, logistics, printing, manufacturing, and other applications. HALCON is MVTec’s comprehensive SDK for machine vision and includes an integrated development environment (IDE). Among the tools provided are:

- Deep Counting can be used to count many objects quickly and accurately as well as to detect their position.

- Deep OCR reads text reliably, regardless of orientation and font. It can identify text in arbitrary print styles or with previously unseen character types, and it improves readability in noisy and low-contrast environments.

- 3D Gripping Point Detection can be used to detect surfaces suitable for gripping on any object.

General tool makers like MathWorks also offer algorithms and EDA tools that can support a variety of hardware environments for processing, analyzing, and presenting video information. The MATLAB Computer Vision Toolbox supports the design and testing of computer vision, three-dimensional (3D) vision, and video processing applications.

Designers can use deep learning (DL) and ML algorithms like you only look once (YOLO), single shot detector (SSD), and aggregate channel features (ACF) to develop custom object detection functions. DL algorithms like U-Net and the Mask region-based convolutional neural network (Mask R-CNN) are available for semantic and instance segmentation. Segmentation and detection algorithms are also available to analyze images that are too large to fit into the available memory. In addition, pre-trained models are available for detecting common objects, pedestrians, and faces.
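Detectors such as YOLO and SSD typically emit many overlapping candidate boxes for the same object, so a common post-processing step is non-maximum suppression (NMS) based on intersection over union (IoU). The sketch below is a minimal, generic version in plain Python, not the Toolbox's implementation; the box format `(x1, y1, x2, y2)`, the function names, and the 0.5 threshold are assumptions for illustration.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(detections, iou_threshold=0.5):
    """Greedy NMS over a list of (score, box) pairs.

    Visits detections from highest to lowest score and keeps one only if
    it does not overlap an already kept box by more than iou_threshold.
    """
    kept = []
    for score, box in sorted(detections, key=lambda d: d[0], reverse=True):
        if all(iou(box, kept_box) <= iou_threshold for _, kept_box in kept):
            kept.append((score, box))
    return kept


# Two near-duplicate boxes on one object plus a separate object.
detections = [
    (0.8, (12, 12, 52, 52)),
    (0.9, (10, 10, 50, 50)),
    (0.7, (100, 100, 140, 140)),
]
kept = non_max_suppression(detections)
print(kept)
```

Here the 0.8 box overlaps the 0.9 box with an IoU of about 0.82, so it is suppressed and two detections remain.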

The algorithms can be run on GPUs or multicore processors for faster performance. The Toolbox supports C/C++ code generation to speed integration with existing code libraries in embedded systems. Designers can automate calibration workflows for single, stereo, and fisheye cameras. Visual and point cloud simultaneous localization and mapping (SLAM) are supported, as well as stereo vision and structure-from-motion analysis. Algorithms are available for object detection and tracking, and for feature detection, extraction, and matching (Figure 2).
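As a rough illustration of the matching step in a feature detection, extraction, and matching pipeline, the sketch below pairs descriptors by nearest-neighbor Euclidean distance and rejects ambiguous pairs with a ratio test. It is a generic plain-Python example, not the Toolbox's implementation; the function names, the tiny 3-element descriptors, and the 0.75 ratio are assumptions for illustration.

```python
import math


def distance(a, b):
    """Euclidean distance between two equal-length descriptors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def match_descriptors(query, train, ratio=0.75):
    """Return (query_index, train_index) pairs that pass the ratio test.

    A query descriptor is matched to its nearest train descriptor only if
    that distance is clearly smaller than the distance to the second
    nearest one, which filters out ambiguous matches.
    """
    matches = []
    for qi, q in enumerate(query):
        ranked = sorted((distance(q, t), ti) for ti, t in enumerate(train))
        if len(ranked) < 2:
            continue  # The ratio test needs at least two candidates.
        (best_d, best_i), (second_d, _) = ranked[0], ranked[1]
        if best_d < ratio * second_d:
            matches.append((qi, best_i))
    return matches


# Hypothetical descriptors extracted from two images.
train = [(0, 0, 0), (10, 0, 0), (0, 10, 0)]
query = [
    (0.5, 0, 0),  # clearly closest to train[0]
    (5, 0, 0),    # equally far from train[0] and train[1]: ambiguous
    (0, 9, 1),    # clearly closest to train[2]
]
matches = match_descriptors(query, train)
print(matches)  # [(0, 0), (2, 2)]
```

Real descriptors have tens to hundreds of dimensions, and production code would replace the exhaustive sort with an approximate nearest-neighbor search.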

Summary

Machine and computer vision are important in a wide range of industrial, transportation, medical, and consumer applications. Designing vision systems is complex and requires a mix of high-performance hardware and sophisticated software. Fortunately, a variety of EDA tools is available from component suppliers, dedicated EDA tool makers, and even focused industry organizations. Tools from focused industry organizations will be the topic of part two of this series.

References

Computer vision toolbox, MathWorks

Machine vision software, MVTec

Video and Image Processing Suite, Intel

Video Processing Technologies, Nvidia