Accuracy as it relates to the measurement of electrical signals would seem to be a simple, straightforward concept. In the simple case, a reading shown on a digital display could be compared to that of a perfectly accurate instrument. The discrepancy, if any, could be expressed as a fraction of a percent.

The difficulty with this scenario, however, is that it presupposes the existence of a perfectly accurate instrument. But there is no such thing. Moreover, quantum mechanics asserts that at the smallest and most fundamental level, physical phenomena themselves do not have simultaneously identifiable momentum and position values. The very concept of accuracy can be problematic.

Is there a way out of this dilemma? Well, while there’s no possibility of absolute certainty in our wave-function world, there is no reason that we cannot approach ideal limits ever more closely, and this should suffice for any real-world application. In fact, there are several possible approaches.

One of these is to lock onto a physical phenomenon that exhibits one relatively unvarying parameter and to calibrate a parent instrument to it. A frequency standard, for example, is a stable oscillator that generates an unperturbed fundamental (the harmonics actually can be quite useful) whose frequency varies over time by only a tiny amount. An RC oscillator does not conform to this definition. Its output depends strongly on temperature and component age, and either dependence alone would make it unsuitable as a frequency standard.

For a truly stable frequency standard, we can look to the cesium atomic clock. In this instrument, the electronic transitions between two hyperfine ground states of cesium-133 atoms are used to control the output frequency. This matters in the electronics laboratory because the reciprocal of frequency is the period, which is a time, so the second can be defined in terms of the cesium frequency standard.

The cesium atomic clock frequency is exactly 9,192,631,770 Hz, well up into the gigahertz range. The reason that we can use the word “exact” in this context is that the second is actually, in the SI, defined in terms of this highly stable frequency. In 1967, when the definition was adopted, it agreed, to the limits of then-current measuring technology, with the previously used astronomical second based on Earth’s orbit around the sun.
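To make the link between frequency and time concrete, here is a minimal Python sketch. The function names are illustrative only; the sole physical input is the defined cesium frequency quoted above.

```python
# Defined cesium-133 hyperfine transition frequency, exact in the SI.
CESIUM_HZ = 9_192_631_770


def period_seconds(frequency_hz: float) -> float:
    """Return the duration of one cycle, the reciprocal of the frequency."""
    return 1.0 / frequency_hz


def elapsed_seconds(cycle_count: int, frequency_hz: int = CESIUM_HZ) -> float:
    """Convert a count of oscillator cycles into elapsed time."""
    return cycle_count / frequency_hz


if __name__ == "__main__":
    print(f"One cesium cycle lasts {period_seconds(CESIUM_HZ):.4e} s")
    print(f"{CESIUM_HZ} cycles last {elapsed_seconds(CESIUM_HZ)} s")
```

Counting exactly 9,192,631,770 cycles therefore yields one second by definition, which is why the word "exact" applies.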

Electrical parameters (amps, volts, ohms and consequently watts) are related to one another by Ohm’s law: E = I × R. The watt also falls into this scheme because it is defined as the product of volts and amps. But all this remains abstract until one of these parameters is nailed down to some actual phenomenon, and that anchor is the aforementioned cesium standard.
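The relationships among these units can be sketched in a few lines of Python. The function names and the example values (2 A through 50 Ω) are hypothetical, chosen only to illustrate the arithmetic.

```python
def voltage(current_a: float, resistance_ohm: float) -> float:
    """Ohm's law: E = I x R, in volts."""
    return current_a * resistance_ohm


def power(voltage_v: float, current_a: float) -> float:
    """Power in watts is the product of volts and amps."""
    return voltage_v * current_a


if __name__ == "__main__":
    current = 2.0       # amps (illustrative value)
    resistance = 50.0   # ohms (illustrative value)
    e = voltage(current, resistance)
    print(f"E = {e} V, P = {power(e, current)} W")
```

Fixing any one of the quantities to a physical reference fixes the others through these equations, which is the point the paragraph above makes.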

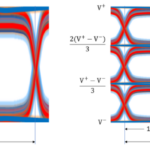

The way this relationship to cesium frequency comes about is by definition. Until the 2019 revision of the SI, the amp was defined as the constant current that produces a force of 2 × 10⁻⁷ N per meter of length between two straight parallel conductors of infinite length and negligible cross section, placed one meter apart in a vacuum. Another way to picture an amp, and one that is unimaginably precise, is as the current flowing through a conductor when about 6.2415093 × 10¹⁸ electrons move past a given point per second.
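Both descriptions of the amp can be checked numerically. The sketch below assumes the elementary charge fixed in the 2019 SI (1.602176634 × 10⁻¹⁹ C) and the classical value of the magnetic constant, 4π × 10⁻⁷ N/A², that underlies the parallel-conductor definition; the function names are illustrative.

```python
import math

ELEMENTARY_CHARGE_C = 1.602176634e-19   # coulombs per electron (fixed in the 2019 SI)
MU_0 = 4 * math.pi * 1e-7               # N/A^2, classical value (assumption of this sketch)


def electrons_per_second(current_a: float) -> float:
    """Number of electrons passing a point each second for a given current."""
    return current_a / ELEMENTARY_CHARGE_C


def force_per_meter(i1_a: float, i2_a: float, separation_m: float) -> float:
    """Force per unit length between two long parallel conductors, in N/m."""
    return MU_0 * i1_a * i2_a / (2 * math.pi * separation_m)


if __name__ == "__main__":
    print(f"1 A is about {electrons_per_second(1.0):.7e} electrons per second")
    print(f"Force between 1 A conductors 1 m apart: {force_per_meter(1.0, 1.0, 1.0):.3e} N/m")
```

With 1 A in each conductor and 1 m of separation, the force expression reduces to the 2 × 10⁻⁷ N/m figure in the definition.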

With this definition and that of the second as it relates to a cesium oscillator, the basic electrical parameters are defined to a high degree of accuracy. Additionally — but as isolated exercises so that we do not fall into the error of over-determination — the volt can be tied to the Josephson junction and the ohm to the quantum Hall effect. Both of these effects are easier to realize in the laboratory than counting electrons to define the amp.
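As a rough illustration of how the volt and the ohm tie to quantum effects, the sketch below computes the Josephson constant (2e/h) and the von Klitzing constant (h/e²) from the fixed SI values of the Planck constant and the elementary charge. The 10 GHz drive frequency is a hypothetical value, and the single-step voltage relation V = n·f/K_J is the idealized textbook form, not a description of any particular laboratory setup.

```python
PLANCK_J_S = 6.62607015e-34             # Planck constant, J*s (fixed in the 2019 SI)
ELEMENTARY_CHARGE_C = 1.602176634e-19   # elementary charge, C (fixed in the 2019 SI)

# Josephson constant: frequency-to-voltage ratio of a Josephson junction, Hz/V.
K_J = 2 * ELEMENTARY_CHARGE_C / PLANCK_J_S

# von Klitzing constant: quantized Hall resistance, ohms.
R_K = PLANCK_J_S / ELEMENTARY_CHARGE_C**2


def josephson_voltage(frequency_hz: float, step: int = 1) -> float:
    """Idealized junction voltage for a given microwave drive frequency and step number."""
    return step * frequency_hz / K_J


if __name__ == "__main__":
    print(f"K_J = {K_J:.6e} Hz/V")
    print(f"R_K = {R_K:.5f} ohm")
    # 10 GHz is an illustrative drive frequency only.
    print(f"One step at 10 GHz: {josephson_voltage(10e9):.4e} V")
```

Because the junction voltage depends only on a frequency and fundamental constants, a good frequency standard translates directly into a good voltage reference.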

Research by physicists at the National Institute of Standards and Technology (NIST) led to the development in the past decade of the quantum logic clock, based on an aluminum ion used for spectroscopy and paired with a partner “logic” ion. This clock employs laser-cooled individual ions that are confined in an electromagnetic trap. The instrument tracks time by means of an ion oscillation whose frequency is roughly 100,000 times higher than the microwave frequencies used previously. Its accuracy is difficult to ascertain directly because it lies beyond that of any previous standard against which it could be compared. This accuracy arises because the ions are largely insensitive to ambient magnetic, electrical and thermal effects, which extensive shielding and environmental controls cannot totally eliminate in earlier clocks.

Laboratories and product development facilities are in the business of making accurate measurements, often many times daily. When a high-end instrument such as an oscilloscope is purchased, it comes with a certificate of traceable calibration. If the instrument is sent in for repair, it will be recalibrated and a new certificate issued. Additionally, there is a recommended recalibration interval, typically 12 months, and a due date is included. An instrument fresh from calibration will have a seal that must be broken to open its enclosure, voiding the calibration.

There are numerous calibration laboratories throughout the world. These labs differ widely in capability, largely depending on the quality and type of their in-house frequency standard. This primary or house standard is continuously running, and depending on the type, it must be checked against the NIST standard to see if it has been subject to drift.

This cesium atomic clock operates to an uncertainty of one second in 30 million years.

A rubidium secondary oscillator.

The types of frequency standards generally used are quartz oscillators, atomic oscillators and instruments that are “disciplined” to agree with the Global Positioning System (GPS) or other outside sources. The house standard is maintained in a central, carefully controlled location, and its output is conveyed to areas where technicians perform the calibrations. Typically, a 10 MHz sine wave goes from the house oscillator to a distribution amplifier and thence to the individual benches. This is the essence of a calibration lab.
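Drift in a house standard is usually discussed as a fractional frequency offset. The following sketch shows the arithmetic under stated assumptions: the measured value of 10,000,000.002 Hz is invented for illustration, the helper names are hypothetical, and a Julian year of 365.25 days is assumed when converting a "one second in N years" figure.

```python
SECONDS_PER_DAY = 86_400
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY   # Julian year (assumption of this sketch)


def fractional_offset(measured_hz: float, nominal_hz: float = 10e6) -> float:
    """Fractional frequency offset of an oscillator relative to its nominal value."""
    return (measured_hz - nominal_hz) / nominal_hz


def time_error_per_day(offset: float) -> float:
    """Time error in seconds accumulated over one day at a constant offset."""
    return offset * SECONDS_PER_DAY


def fractional_uncertainty(seconds_lost: float, years: float) -> float:
    """Convert 'x seconds in y years' into a fractional uncertainty."""
    return seconds_lost / (years * SECONDS_PER_YEAR)


if __name__ == "__main__":
    offset = fractional_offset(10_000_000.002)   # illustrative reading
    print(f"Fractional offset: {offset:.2e}")
    print(f"Time error per day: {time_error_per_day(offset) * 1e6:.2f} microseconds")
    print(f"1 s in 30 million years: {fractional_uncertainty(1, 30e6):.2e}")
```

An offset of only 2 mHz on a 10 MHz reference already accumulates tens of microseconds per day, which is why the house standard must be checked regularly against a better reference.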

The atomic oscillators may be rubidium or cesium. Quartz oscillators are less expensive and less stable and accurate than rubidium and cesium oscillators. All three types are exceptionally user-friendly and simple to operate in bench-type or rack-mount versions, but they all require maintenance and periodic recalibration to the NIST standard if authentic traceability is to be maintained.

The important task of maintaining traceability can be effectively accomplished by means of an impressive technology that consists of tethering the house standard to the Global Positioning System, which uses a highly accurate oscillating signal that is broadcast to overlapping footprints throughout the world. This mechanism works well to maintain traceability, although it is vulnerable to failure if the building-mounted antenna is damaged (wind, stray bullets) or the transmission cable is damaged (squirrels, a failed termination).

Another type of instrument that is extremely accurate is the hydrogen maser, but it is far too expensive to be used in calibration labs. Its use is confined to specialized research laboratories and national metrology facilities such as NIST.

The hydrogen maser references intrinsic properties of the hydrogen atom to construct a highly accurate frequency standard. This instrument takes advantage of the fact that the single proton and the single electron of a hydrogen atom both have spins which may be in the same or opposite directions. The hydrogen atom is at a higher energy state if these two particles are spinning in the same direction. It has a lower energy state if they are spinning in opposite directions.

To reverse the spin of the electron, the amount of energy required is equal to that of a photon oscillating at approximately 1,420,405,751.77 Hz. Because the electron’s direction of spin is a discrete, two-state property rather than a continuously variable one, the transition corresponds to this single, well-defined frequency. With a lot of related instrumentation and equipment, that unvarying frequency can be captured at a cost of about a quarter-million dollars.
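The energy involved in the spin flip follows from the Planck relation E = h·f. The sketch below uses the commonly quoted value of about 1,420,405,751.768 Hz for the hydrogen hyperfine transition together with the fixed SI values of the Planck constant, the elementary charge and the speed of light; the function names are illustrative.

```python
PLANCK_J_S = 6.62607015e-34             # Planck constant, J*s
ELEMENTARY_CHARGE_C = 1.602176634e-19   # joules per electronvolt
SPEED_OF_LIGHT_M_S = 299_792_458        # speed of light, m/s

# Commonly quoted hydrogen hyperfine transition frequency, Hz.
HYDROGEN_LINE_HZ = 1_420_405_751.768


def photon_energy_joules(frequency_hz: float) -> float:
    """Photon energy from the Planck relation E = h * f."""
    return PLANCK_J_S * frequency_hz


def wavelength_m(frequency_hz: float) -> float:
    """Free-space wavelength corresponding to a frequency."""
    return SPEED_OF_LIGHT_M_S / frequency_hz


if __name__ == "__main__":
    energy_j = photon_energy_joules(HYDROGEN_LINE_HZ)
    print(f"Spin-flip energy: {energy_j:.4e} J")
    print(f"Spin-flip energy: {energy_j / ELEMENTARY_CHARGE_C:.4e} eV")
    print(f"Wavelength: {wavelength_m(HYDROGEN_LINE_HZ) * 100:.2f} cm")
```

The energy is only a few millionths of an electronvolt, which helps explain why so much supporting equipment is needed to isolate the signal.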

Generally, calibration labs avoid quartz oscillators. While their short-term stability and accuracy are acceptable, they can go out of spec without warning, and if this goes undetected, it creates a big problem for affected customers.

The post In search of accuracy: Instrument calibration using frequency standards appeared first on Test & Measurement Tips.