Since the late 1970s, digital technology has dominated the field of computing. Left behind for most applications was the analog computer, a tool that had existed in purely mechanical forms since ancient times (think of the Antikythera mechanism, the astrolabe or even the humble slide rule). Before the digital revolution, analog computing showed great promise and was used in many research pursuits, primarily military and nuclear applications. Electrically based analog computers first appeared in the early 1900s with the introduction of electro-mechanical hybrid differential analyzers. As the century progressed and electronic technologies such as the operational amplifier arrived, analog computers became affordable: by the early 1960s, the Heathkit EC-1 could be purchased for less than $200.

By the following decade, transistor miniaturization had made the digital computer dominant. Analog still has its place; for example, most sensors are analog. However, their output is often digitized by analog-to-digital converters (ADCs) before it is used in digital computations. Today, though, there is much discussion around a second generation of analog computing or, more precisely, a new take on analog-digital hybrids, this time with digital giving way in applications where analog computation is more effective or efficient. So, what changes are driving this paradigm shift?

Neural Networks: The human brain boasts perhaps the most impressive computational performance per watt of any computer we know of, and yet it is decidedly nothing like the Central Processing Unit (CPU) in our desktop computers. For the record, the average brain consumes about 20 watts yet can perform the equivalent of about 2 billion megaflops. Today’s research and products based on neural networks, such as Google’s TensorFlow, have driven a shift in the computational engine from CPUs to GPUs (Graphics Processing Units) and now to custom ASICs. In Google’s case, these have been dubbed Tensor Processing Units (TPUs). TPUs are better suited to machine learning workloads, much as GPUs are better suited to graphics than CPUs are. But all of them are still fundamentally digital. If you would like some hands-on experimentation with neural networks, check out the TensorFlow Playground.
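Taking the brain figures quoted above (about 20 watts and about 2 billion megaflops) at face value, the implied efficiency works out as follows. This is only arithmetic on those rough estimates, not an independent measurement:

$$
2 \times 10^{9}\ \text{MFLOPS} = 2 \times 10^{9} \times 10^{6}\ \text{FLOP/s} = 2 \times 10^{15}\ \text{FLOP/s}
$$

$$
\frac{2 \times 10^{15}\ \text{FLOP/s}}{20\ \text{W}} = 10^{14}\ \text{FLOP/s per watt} = 10^{14}\ \text{FLOP per joule}
$$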

Analog processing could deliver these results more efficiently, but it is not without its share of issues. For one, analog signals are generally prone to errors induced by electrical noise, which raises concerns about output accuracy. Also, we take the ability to program a digital computer for granted; programming in the analog domain has proven far more challenging. Just last year, MIT and Dartmouth College presented a first attempt at a compiler for an analog computer at a conference of the Association for Computing Machinery (ACM). Still, for applications where “good enough” is acceptable, such as speech and image recognition, low-power yet effective analog processing might just be the answer.
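To illustrate why “good enough” can work despite noise, here is a minimal sketch that compares an exact digital dot product with a hypothetical analog one in which every multiply picks up additive Gaussian noise. The noise model, the function names (`noisy_analog_dot`, `agreement_rate`) and the parameters are assumptions for illustration only; no real analog chip is being modeled. It measures how often a noisy “classifier” (pick the row with the largest dot product) still agrees with the exact answer.

```python
import random


def exact_dot(weights, inputs):
    """Reference digital dot product."""
    return sum(w * x for w, x in zip(weights, inputs))


def noisy_analog_dot(weights, inputs, noise_std, rng):
    """Hypothetical analog dot product: each product gets additive Gaussian
    noise with standard deviation noise_std (an assumed, simplified model)."""
    return sum(w * x + rng.gauss(0.0, noise_std) for w, x in zip(weights, inputs))


def argmax(values):
    """Index of the largest value."""
    return max(range(len(values)), key=lambda i: values[i])


def agreement_rate(weight_rows, trials, noise_std, seed=0):
    """Fraction of random inputs for which the noisy analog computation picks
    the same winning row as the exact digital computation."""
    rng = random.Random(seed)
    n = len(weight_rows[0])
    agree = 0
    for _ in range(trials):
        x = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        exact = [exact_dot(w, x) for w in weight_rows]
        noisy = [noisy_analog_dot(w, x, noise_std, rng) for w in weight_rows]
        if argmax(exact) == argmax(noisy):
            agree += 1
    return agree / trials


if __name__ == "__main__":
    rng = random.Random(42)
    rows = [[rng.uniform(-1.0, 1.0) for _ in range(16)] for _ in range(3)]
    for std in (0.0, 0.01, 0.1, 1.0):
        print(f"noise_std={std}: agreement={agreement_rate(rows, 2000, std):.3f}")
```

With small noise the decision almost always matches the exact result even though the individual sums are never bit-exact; as the noise grows, agreement falls off, which is the accuracy concern mentioned above.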

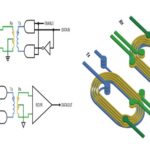

Memristors and Resistive Memory: Once only a theoretical possibility, memristors are today a reality. The memristor is considered a fundamental circuit element, just like the resistor, inductor and capacitor. The unique property of the “memory resistor” is that its resistance depends on the amount and direction of the charge that has flowed through it. Interestingly, the memristor “remembers” its resistance even after it is disconnected from a powered circuit. And because that resistance can take on a continuous range of values rather than a few discrete states, the memristor acts as a continuous memory, which makes it well suited to analog computation.
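A minimal Python sketch can make this behavior concrete. It assumes a simplified linear ion-drift style model, a common first approximation: an internal state `x` between 0 and 1 moves in proportion to the charge passed, and the resistance interpolates between an “on” and an “off” value. The class `LinearDriftMemristor` and the values of `r_on`, `r_off` and `k` are hypothetical illustrations, not data for any real device.

```python
from dataclasses import dataclass


@dataclass
class LinearDriftMemristor:
    """Hypothetical memristor using a simplified linear-drift model.
    All parameter values are illustrative assumptions."""
    r_on: float = 100.0      # ohms when the state is fully "on" (x = 1)
    r_off: float = 16_000.0  # ohms when the state is fully "off" (x = 0)
    k: float = 1.0e4         # assumed change in x per coulomb of charge
    x: float = 0.1           # internal state in [0, 1]

    def resistance(self) -> float:
        """Resistance interpolates linearly between r_off and r_on."""
        return self.r_on * self.x + self.r_off * (1.0 - self.x)

    def apply_current(self, current: float, duration: float) -> None:
        """Drive a constant current (amps) for duration (seconds).
        The state moves by k * charge; positive current lowers resistance,
        negative current raises it. The state is clamped to [0, 1]."""
        charge = current * duration
        self.x = min(1.0, max(0.0, self.x + self.k * charge))


if __name__ == "__main__":
    m = LinearDriftMemristor()
    print("initial:", m.resistance())
    m.apply_current(1e-3, 0.01)      # push 10 microcoulombs forward
    print("after forward pulse:", m.resistance())
    # No current applied ("disconnected"): the state, and so the resistance, is retained.
    print("still:", m.resistance())
    m.apply_current(-1e-3, 0.01)     # same charge in the opposite direction
    print("after reverse pulse:", m.resistance())
```

Because `x` is a continuous quantity, any resistance between `r_on` and `r_off` can be stored, which is the “continuous memory” property described above. Real devices are nonlinear and drift, so this is only a conceptual model.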

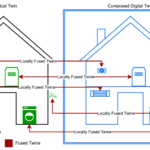

Biomimicry: Building A Better Mousetrap. Lastly, autonomous robots seem to be a likely consequence of the work being done today on drones, machine learning and autonomous vehicles. Today, all of these are heavily dominated by digital computation. But by looking to Mother Nature, we may find that building technological analogs of certain simpler animal species is a way to create autonomous robots capable of performing low-level yet necessary functions, such as pollinating farmland or patrolling the skies and oceans for threats. Energy efficiency and rapid, continuous operation will be essential to such systems, and analog computing seems poised to deliver on both counts. Moreover, any system, biological or otherwise, that can be modeled as a set of differential equations is a natural candidate for analog computation, because an analog computer solves such equations directly by wiring together integrators.
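As a sketch of how an analog computer tackles a differential equation, the Python below digitally emulates a classic patch for a damped oscillator, x'' + 2ζωx' + ω²x = 0: a summing amplifier forms x'' from the fed-back x and x', and two integrators turn x'' into x' and then x. The function name `analog_oscillator`, its parameters and the fixed time step are assumptions for illustration; real op-amp integrators also invert the sign of their output, a detail omitted here for readability.

```python
import math


def analog_oscillator(omega, zeta, x0, v0, t_end, dt=1e-4):
    """Emulate an analog-computer patch for x'' + 2*zeta*omega*x' + omega**2*x = 0.

    The analog hardware integrates continuously; here small fixed time steps
    (semi-implicit Euler) stand in for that continuous integration.
    Returns (x, x') at t_end.
    """
    x, v = x0, v0
    steps = int(round(t_end / dt))
    for _ in range(steps):
        a = -2.0 * zeta * omega * v - omega**2 * x  # summing amplifier: forms x''
        v += a * dt                                  # first integrator: x'' -> x'
        x += v * dt                                  # second integrator: x' -> x
    return x, v


if __name__ == "__main__":
    # Undamped case: the exact solution is x(t) = cos(t) for omega = 1, x0 = 1, v0 = 0.
    x, v = analog_oscillator(omega=1.0, zeta=0.0, x0=1.0, v0=0.0, t_end=1.0)
    print(f"x(1) ~ {x:.5f}, exact cos(1) = {math.cos(1.0):.5f}")
    # Damped case: the oscillation decays toward zero.
    xd, _ = analog_oscillator(omega=1.0, zeta=0.5, x0=1.0, v0=0.0, t_end=10.0)
    print(f"damped x(10) ~ {xd:.5f}")
```

On an actual analog machine the loop disappears: the feedback wiring itself enforces the equation, and the solution appears as a voltage in real time, which is where the speed and energy advantages come from.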

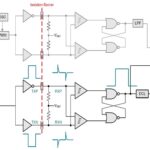

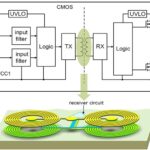

As the pendulum swings back from purely digital computational engines, it will be interesting to see which applications remain digital and which give way to a new generation of analog processing technology. At a minimum, it seems likely that new generations of integrated circuits will embed an analog compute engine alongside more traditional digital microprocessor and microcontroller hardware, allowing application designers to choose which processes run more efficiently in analog form. For digital computer engineers who have long lived in a CMOS world, now would seem to be a great time to dust off those analog circuit textbooks.
