Automotive ADAS designers tout up-and-coming LiDAR detection technology, coupled with mmWave radar and cameras, as a further boost to autonomous vehicle design. But that is not the complete LiDAR story. Let’s see what happens when engineers get this new toy. Combined with GPS, the technology is finding a home in agriculture, astronomy, oceanography, cameras, and the transport industry.

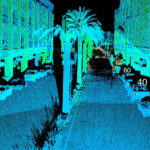

From smart cities to autonomous machines, companies in the public and private sectors are looking to leverage the benefits of 3D perception. To fill the 3D void, application developers are turning to LiDAR to replace legacy sensing technologies or to create solutions that were once unachievable. Unlike 2D radar and 1D camera perception, LiDAR produces highly detailed, accurate spatial measurements and works in a range of environments, including at night and under direct sunlight.

A little about LiDAR

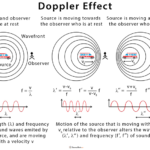

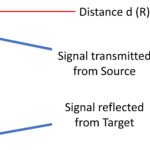

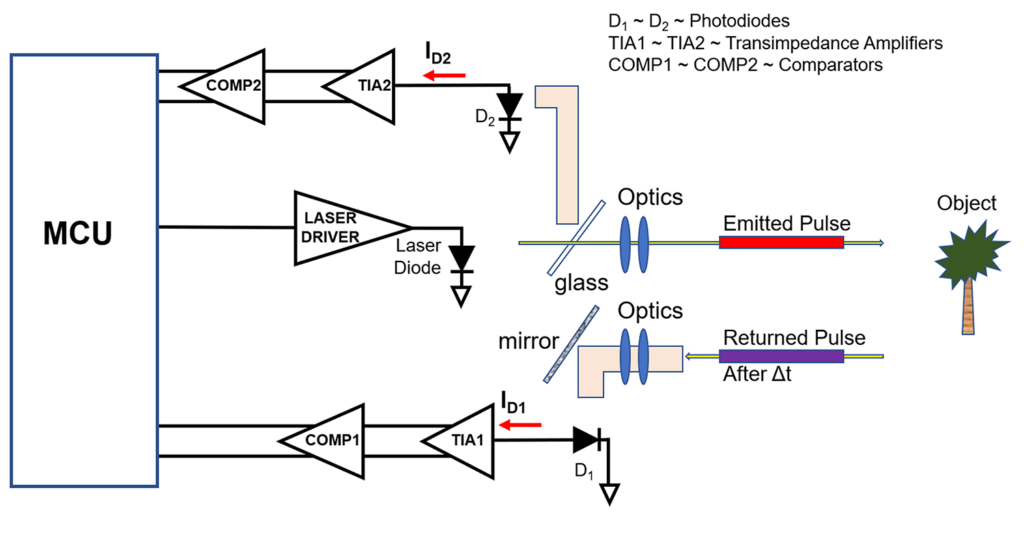

LiDAR stands for light detection and ranging. It bounces laser pulses off objects and measures the time each pulse takes to return to the source (Figure 1).
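The return-time measurement maps to distance through the usual time-of-flight relation: the pulse covers the path twice, so the range is half the round-trip time multiplied by the speed of light. The following is a minimal sketch of that arithmetic. The function name `range_from_round_trip` is a hypothetical helper, not part of any LiDAR vendor's API, and it assumes the pulse travels at the vacuum speed of light.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # vacuum value; air is assumed close enough


def range_from_round_trip(round_trip_s: float) -> float:
    """Return the one-way distance in meters for a measured round-trip time."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse goes out and comes back, so halve the total path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


if __name__ == "__main__":
    # A return after about 1.334 microseconds corresponds to roughly 200 m.
    print(f"{range_from_round_trip(1.334e-6):.1f} m")
```

The numbers show why LiDAR timing electronics have to be fast: at these speeds, one nanosecond of timing error is about 15 cm of range error.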

LiDAR complements camera and radar instrumentation to create the sensor-fusion picture, in which multiple bursts of the LiDAR signal produce a colorless image with depth and accuracy.
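One way to picture how many individual returns become a depth image: each return carries a range and the beam's pointing angles, and converting those to Cartesian coordinates yields one point of a 3D point cloud. The sketch below assumes a simple sensor frame (x forward, y left, z up) and angles in degrees. The function name and frame convention are illustrative assumptions, since real sensors document their own conventions.

```python
import math


def polar_to_xyz(range_m: float, azimuth_deg: float, elevation_deg: float):
    """Convert one LiDAR return (range plus beam angles) to an (x, y, z) point.

    Assumed frame: x forward, y left, z up; azimuth is measured in the
    horizontal plane from the x axis, elevation upward from that plane.
    """
    azimuth = math.radians(azimuth_deg)
    elevation = math.radians(elevation_deg)
    horizontal = range_m * math.cos(elevation)  # projection onto the ground plane
    x = horizontal * math.cos(azimuth)
    y = horizontal * math.sin(azimuth)
    z = range_m * math.sin(elevation)
    return (x, y, z)


if __name__ == "__main__":
    # Three hypothetical returns swept across a small horizontal arc.
    returns = [(12.0, -5.0, 0.0), (12.1, 0.0, 0.0), (12.3, 5.0, 1.0)]
    cloud = [polar_to_xyz(r, az, el) for r, az, el in returns]
    for point in cloud:
        print(tuple(round(c, 2) for c in point))
```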

The LiDAR we know – automotive

The most prominent push for vision electronics comes from the automobile industry. In the automobile, the one-dimensional (1D) camera provides vision and, with software, delivers high-definition color data to help with object classification. Radio detection and ranging, or radar, produces low-resolution two-dimensional (2D) data while providing unique spatial insight, object range, and velocity tracking.

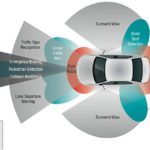

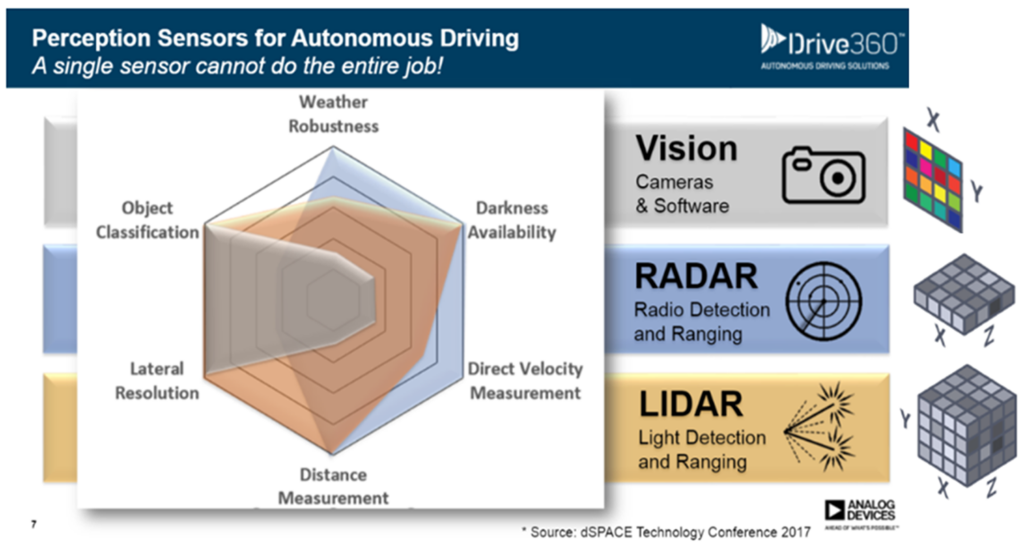

The LiDAR equipment rounds out the images with a three-dimensional (3D) perspective that brings clarity and shape definition (Figure 2).

In Figure 2, it’s clear how this combination of vision electronics adds depth across six environmental characteristics.

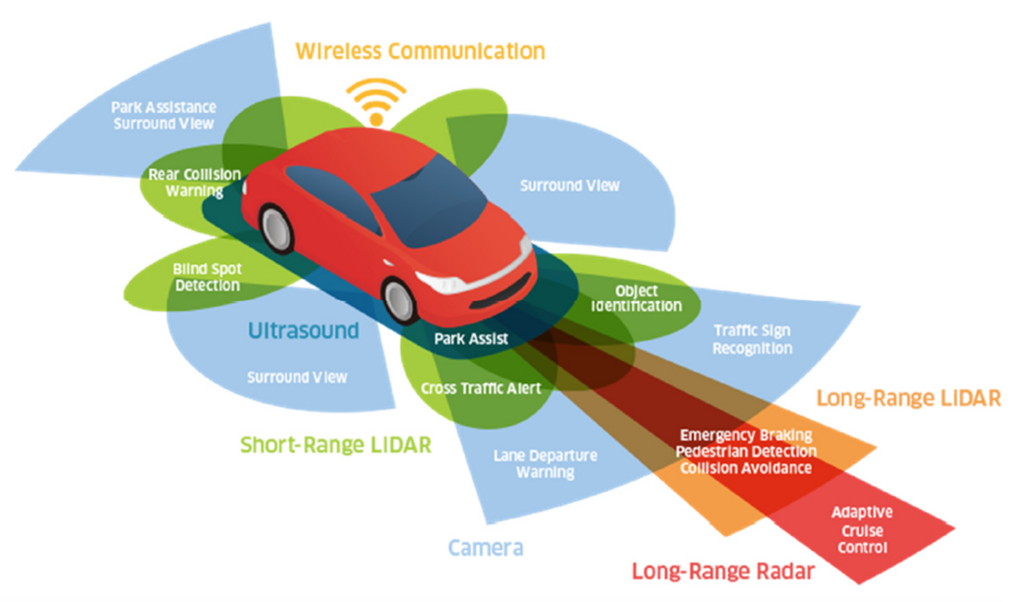

LiDAR is one of the three sensor types that feed information to the automobile’s computing platform, which runs artificial intelligence (AI) software. This platform uses sensor fusion to create a comprehensive 3D map of the vehicle’s environment and to classify the objects in it. The complete suite of autonomous vehicle 3D-sensing technologies includes the camera, radar equipment, and LiDAR (Figure 3).

The B&W and color cameras provide passive visual sensing. The 360-degree camera view uses sophisticated object-detection algorithms to assess the local environmental context, such as traffic signs and signals. The cameras are also helpful for lane-departure warnings.

The radar equipment uses millimeter-wave radio signals at 24, 77, or 79 GHz for detection, localization, object range, and velocity tracking. Radar performs well in extreme weather conditions and at ranges of 200 meters and beyond.
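Velocity tracking with radar is commonly based on the Doppler effect: a target moving toward or away from the sensor shifts the frequency of the reflected signal. The sketch below shows that relation for a 77-GHz carrier. It assumes a simple two-way Doppler model and speeds far below the speed of light. The function names are hypothetical, and a production radar derives velocity through more involved signal processing.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def doppler_shift_hz(speed_m_s: float, carrier_hz: float) -> float:
    """Two-way Doppler shift for a target closing at speed_m_s (radial speed)."""
    # The factor of 2 accounts for the outbound and the reflected path.
    return 2.0 * speed_m_s * carrier_hz / SPEED_OF_LIGHT_M_PER_S


def radial_speed_from_doppler(shift_hz: float, carrier_hz: float) -> float:
    """Invert the relation: recover radial speed from a measured shift."""
    return shift_hz * SPEED_OF_LIGHT_M_PER_S / (2.0 * carrier_hz)


if __name__ == "__main__":
    carrier = 77e9  # one of the automotive bands named in the text
    shift = doppler_shift_hz(30.0, carrier)  # 30 m/s is 108 km/h
    print(f"Doppler shift: {shift / 1e3:.1f} kHz")
    print(f"Recovered speed: {radial_speed_from_doppler(shift, carrier):.1f} m/s")
```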

Long-range radar (LRR) detects objects and hazards at high speeds and during long-distance highway driving, which makes it a good fit for automatic distance control, adaptive cruise control, and emergency braking. The applications for short-range radar are blind-spot monitoring, lane-change assist, park assist, and rear-end collision warning.

Automotive LiDAR applications use long-range and short-range detection techniques. Long-range LiDAR (up to 200 m) is used for object detection, localization, and identification/classification. Long-range applications also include pedestrian identification, collision avoidance, and emergency braking. LiDAR performs worse than radar in poor weather. Its advantage is high angular and linear resolution.

Short-range LiDAR monitors the immediate surroundings of a vehicle (e.g., around the bumpers). Unlike short-range radar, short-range LiDAR both detects objects and identifies them. For example, it recognizes the difference between a fire hydrant and a child on a sidewalk.

The off-road LiDAR

LiDAR technology is used in several applications outside of the automotive industry, such as camera enhancement, mapping, accurate elevation measurement, bathymetry, and urban utility planning.

Let’s delve into some of these interesting LiDAR spin-offs.

iPhone camera enhancement

The iPhone is at the top of my list. Its LiDAR sensor enhances low-light photography and leads to 6x faster autofocus in Night Mode. This camera feature comes with the high-end iPhone 12 Pro and iPhone 13 Pro, as well as Apple’s iPad Pro models.

In addition, the LiDAR feature allows users to capture higher-fidelity 3D images by introducing highly accurate depth information and edge clarity.

Identifying the LiDAR sensor in the iPhone is relatively easy (Figure 4).

On the back of the iPhone, there’s a little black dot in the camera array. This dot, about the size of the flash, is the LiDAR sensor. Its depth sensing makes a difference in photos, AR, and 3D scanning.

Mapping

LiDAR technology with GPS capability assists in general mapping, road mapping, and planning. Researchers and surveyors use LiDAR to obtain a 3D representation of an area that precisely locates the objects in it. This gives more object and map detail in urban and rural areas than other survey methods.

Compared with conventional surveying and photogrammetry methods, LiDAR collects 3D data faster and more accurately and gives a precise map of a given area. This helps engineers create stable road designs with fewer design flaws.

Where will you find LiDAR mapping? Maps of a forest or river with exact dimensions are used for planning roads, bridges, and related construction. In the transportation industry, engineers rely on LiDAR technology to map the structure of a road and plan its location. It also helps determine the road’s length and direction relative to the terrain.

I have also seen a drone use a camera, LiDAR, and GPS to document an automobile accident’s aftermath.

Lunar and terrestrial laser ranging take mapping to an extreme. LiDAR replaces cumbersome, inaccurate measurements of the distance between the moon and earth with precise 3D B&W maps.

But let’s go beyond earth’s moon. LiDAR technology precisely draws the topography of Mars. A laser altimeter orbiting the planet fires pulses at the surface and times their return, and the measurements are sent back to earth to create a 3D model of the planet.

Digital elevation models

Back on earth, the digital elevation model (DEM) records elevation values for surface positions and serves various industries, including construction. Instead of digging out the plans, teams can use LiDAR to capture and measure the height of buildings, electrical and piping installations, and other structures. Compared with other survey methods, LiDAR makes measuring these heights easier, faster, and less costly.
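To make the elevation-model idea concrete, the sketch below bins a small set of (x, y, z) points into square grid cells and keeps the highest z value in each cell. That produces a surface-style elevation grid that includes building tops, which matches the height-measurement use described above. The function name, the cell size, and the sample points are illustrative assumptions, and a real DEM workflow also filters noise and separates ground from structures.

```python
import math


def surface_elevation_grid(points, cell_size_m: float):
    """Bin (x, y, z) points into square cells and keep the highest z per cell.

    Returns a dict mapping (column, row) cell indices to elevation in meters.
    """
    if cell_size_m <= 0:
        raise ValueError("cell_size_m must be positive")
    grid = {}
    for x, y, z in points:
        # floor() keeps cell indexing consistent for negative coordinates too.
        cell = (math.floor(x / cell_size_m), math.floor(y / cell_size_m))
        if cell not in grid or z > grid[cell]:
            grid[cell] = z
    return grid


if __name__ == "__main__":
    # Hypothetical returns: ground near 1 m, a rooftop at 3.5 m, a wall at 2 m.
    sample = [(0.2, 0.3, 1.0), (0.8, 0.1, 3.5), (1.5, 0.5, 2.0)]
    for cell, height in sorted(surface_elevation_grid(sample, 1.0).items()):
        print(cell, height)
```

Keeping the lowest value per cell instead would approximate the bare ground, so the choice of statistic depends on whether the structures or the terrain are of interest.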

Bathymetric mapping

Bathymetry, or submarine topography, is the study of the depth of lake or ocean floors. The LiDAR laser penetrates the water, reflects off the bottom, and yields the distance between the water surface and the floor of the lake or ocean. This technology is handy for locating underwater structures such as cliffs, valleys, sunken ships, and underwater pipes and conduits.
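The depth calculation differs from ranging in air in one respect: light travels more slowly in water. The sketch below assumes the sensor sees two returns, one from the water surface and one from the bottom, and that the beam is close to vertical. The refractive index of 1.33 is a typical textbook value used here as an assumption, and the function name is hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0
WATER_REFRACTIVE_INDEX = 1.33  # assumed typical value; varies with salinity and temperature


def water_depth_m(surface_to_bottom_delay_s: float,
                  refractive_index: float = WATER_REFRACTIVE_INDEX) -> float:
    """Estimate depth from the delay between the surface and bottom returns."""
    if surface_to_bottom_delay_s < 0:
        raise ValueError("delay cannot be negative")
    if refractive_index < 1.0:
        raise ValueError("refractive index must be at least 1.0")
    speed_in_water = SPEED_OF_LIGHT_M_PER_S / refractive_index
    # The delay covers the trip down and back up through the water column.
    return speed_in_water * surface_to_bottom_delay_s / 2.0


if __name__ == "__main__":
    delay = 88.7e-9  # hypothetical 88.7 ns gap between the two returns
    print(f"Estimated depth: {water_depth_m(delay):.2f} m")
```

Ignoring the refractive index would overestimate the depth by about a third, which is why the correction matters even in a rough estimate.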

Urban utility planning

LiDAR technology is instrumental in urban utility planning, such as terrain surveys and electric grid mapping. The LiDAR pulses identify the position of electric grid components on the surface or underground and monitor the sagging of electric wires.

Endless LiDAR applications

LiDAR technology is like a toy for imaginative engineers. It complements cameras, radars, and GPS to produce compelling 1D, 2D, and 3D color images of the world. Consider measuring glacier thickness and movement, identifying potential bridge faults by penetrating the bridge structure, clocking vehicles with police speed guns, and detecting and predicting tsunamis.

So now, your imagination might be set in motion. What does your end-result LiDAR device look like?