At the 2021 (virtual) IEEE Microwave Symposium, the industry looked at where wireless is going and how it will get beyond 5G.

RF and wireless engineers remain at the forefront of connectivity. After all, everyone wants their phones to do everything, and industry wants to control processes without wires. While wires and fibers hold the network together, future connectivity will increasingly move toward wireless.

The 2021 IEEE International Microwave Symposium (IMS) exhibition took place live in Atlanta from June 7-10, but most of the conference content occurred online from June 20 to 25. On Wednesday, June 23, IMS featured an all-day Connected Future Summit, known as the 5G Summit when it began in 2017. For 2021, the summit covered topics such as 5G standards, 5G Advanced, Wi-Fi, 6G, materials, manufacturing, and test. Shahriar Shahramian from Nokia Bell Labs introduced the speakers and moderated a panel discussion.

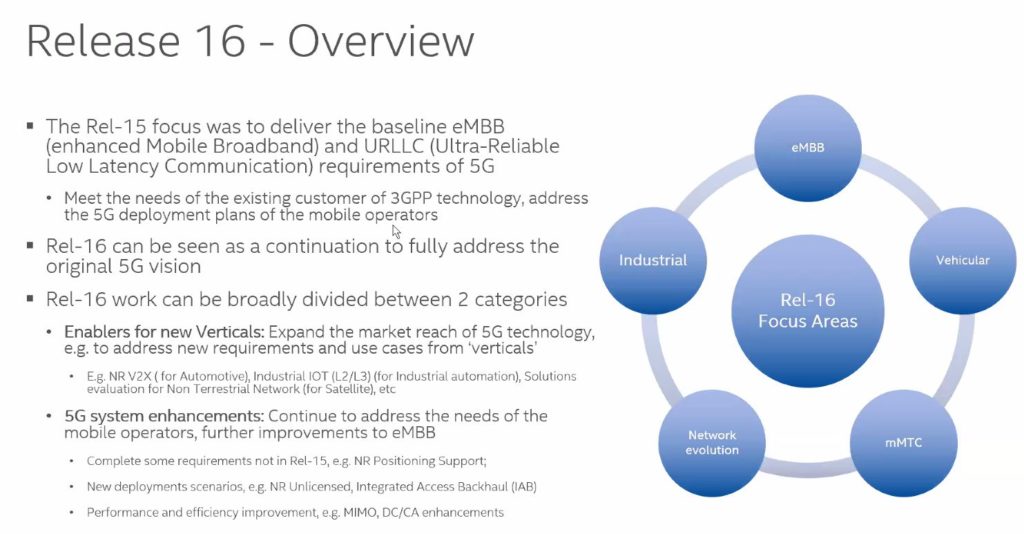

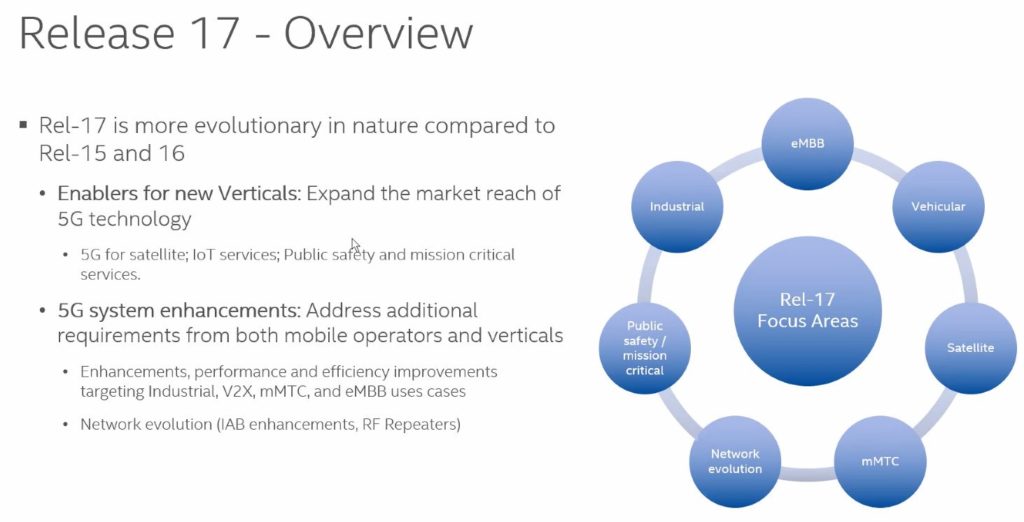

Richard Burbridge of Intel provided an update on 3GPP standards. “Release 15 was a baseline,” he said. “Release 16 added functionality and Release 17 will be evolutionary.” Burbridge also touched on Release 18, for which technical discussions begin the week of June 28.

Releases 16 and 17 add URLLC and IIoT, respectively. Other features include:

- Time-sensitive networking (TSN)

- IIoT sensors

- Wi-Fi over 5G

- Support for positioning accuracy (10 m outdoors, 3 m indoors)

- mmWave FR2 expansion to 71 GHz, including at least 5 GHz of unlicensed spectrum

- Private networks

- Integrated Access and Backhaul (IAB), which includes wireless backhaul

- V2X for traffic management

- MIMO enhancements for increased performance

- Satellite communications that bring 5G broadband and IoT to areas without terrestrial service, including geosynchronous orbit and low-Earth orbit satellites. “Delay is an issue,” he noted.

- Reduced-capability devices (Release 17)
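To put the “delay is an issue” remark about satellite links in perspective, here is a rough sketch of one-way propagation delay to a satellite directly overhead. The altitudes are illustrative assumptions (a geostationary orbit and one example low-Earth orbit), not figures from the talk, and the calculation ignores slant range, processing, and queuing.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458

def one_way_delay_ms(altitude_km):
    """Free-space propagation delay to a satellite directly overhead, in ms."""
    return altitude_km * 1_000 / SPEED_OF_LIGHT_M_PER_S * 1_000

# Assumed altitudes for illustration only.
GEO_ALTITUDE_KM = 35_786  # geostationary orbit
LEO_ALTITUDE_KM = 600     # one example of a low-Earth orbit

for name, altitude_km in (("GEO", GEO_ALTITUDE_KM), ("LEO", LEO_ALTITUDE_KM)):
    print(f"{name}: {one_way_delay_ms(altitude_km):.1f} ms one way")
```

Under these assumptions the geostationary path adds roughly 120 ms each way before any processing, while the low-Earth-orbit path adds only a few milliseconds.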

Figures 1 and 2 highlight Releases 16 and 17.

Figure 1. Intel’s Richard Burbridge highlighted the enhancements that 3GPP Release 16 brings to 5G. Source: IEEE/IMS2021

Figure 2. 3GPP Release 17 adds satellite, IoT, public services, massive machine-to-machine communications, and public safety communications to 5G.

Burbridge also noted how COVID-19 changed the way 3GPP does business. The pandemic has slowed the work, pushing Release 17’s expected completion to March 2022. Everything takes longer when the participants can’t meet in one location.

Intel’s Carlos Cordiero followed Burbridge, looking at Wi-Fi 6/6E and 7. He also noted that in 2030, we will continue to use several wireless technologies such as mmWave cellular, Wi-Fi, Bluetooth, Ultra Wideband (UWB), and satellite. Figure 3 highlights them.

Figure 3. By 2030, we will use even more wireless technologies than we use today. Source: IEEE/IMS2021

Focusing on Wi-Fi, Cordiero explained how Wi-Fi 6 increases performance. Enhancements include OFDMA, 1024 QAM, additional MIMO support, and scheduling.

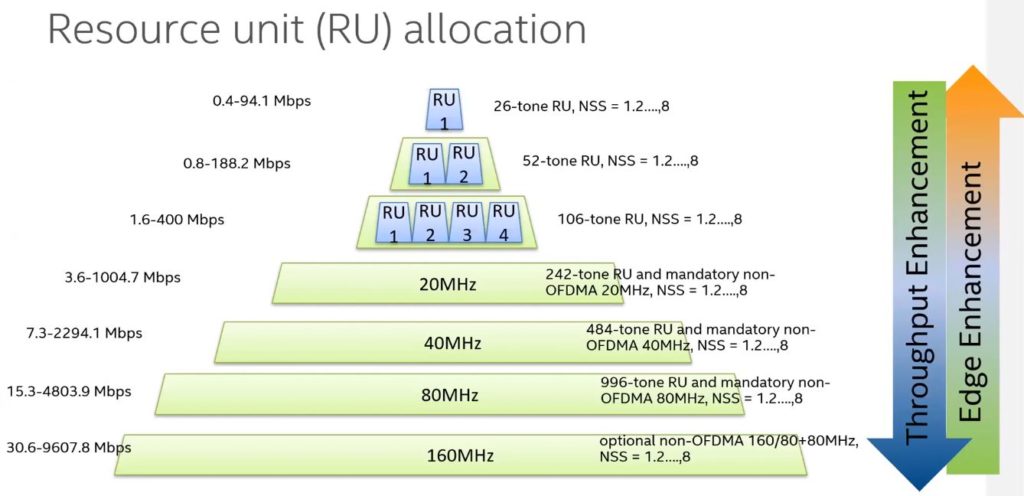

Cordiero showed experimental results where Wi-Fi 6 data rates can reach 9.6 Gb/sec, but practical speeds will be considerably lower, roughly 2 Gb/sec to 2.5 Gb/sec. Furthermore, speed comes at a price. For example, although Wi-Fi 6 offers data channels up to 160 MHz wide, actual channel sizes will vary depending on the number of users. Figure 4 shows the tradeoff in throughput versus number of users. As Wi-Fi 6E products appear, early users will benefit from having few others in the 6-GHz band, so they will see higher throughput. That will change as the number of users grows. The 1200 MHz of spectrum will eventually fill as more devices come into use.
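The 9.6 Gb/sec figure matches the commonly quoted peak PHY rate for 802.11ax. A minimal sketch of that arithmetic follows; the subcarrier count, coding rate, symbol timing, and stream count are the usual 802.11ax maximum-rate parameters as best understood here, not values taken from the talk.

```python
def phy_rate_bps(data_subcarriers, bits_per_symbol, coding_rate,
                 symbol_s, guard_s, spatial_streams):
    """Peak OFDM PHY rate: coded bits per OFDM symbol over symbol time, per stream."""
    bits_per_ofdm_symbol = data_subcarriers * bits_per_symbol * coding_rate
    return bits_per_ofdm_symbol / (symbol_s + guard_s) * spatial_streams

# Assumed maximum-rate parameters for a 160-MHz Wi-Fi 6 channel.
MAX_RATE_PARAMS = dict(
    data_subcarriers=1960,  # data tones in a 160-MHz channel
    bits_per_symbol=10,     # 1024 QAM = 2**10 constellation points
    coding_rate=5 / 6,
    symbol_s=12.8e-6,       # OFDM symbol duration
    guard_s=0.8e-6,         # shortest guard interval
)

peak_bps = phy_rate_bps(spatial_streams=8, **MAX_RATE_PARAMS)
two_stream_bps = phy_rate_bps(spatial_streams=2, **MAX_RATE_PARAMS)

print(f"8 streams: {peak_bps / 1e9:.2f} Gb/sec")
print(f"2 streams: {two_stream_bps / 1e9:.2f} Gb/sec")
```

With two spatial streams, a common client configuration, the same channel tops out at about 2.4 Gb/sec. That falls within the practical range quoted above, although the talk did not give this as the reason.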

Figure 4. As more users come online and consume resource units, data rates for Wi-Fi can drop. Source: IEEE/IMS2021

Scheduling in Wi-Fi 6 takes place at the MAC layer where, as Cordiero noted, some access points can have priority over others. Latency improves by 75% over Wi-Fi 5.

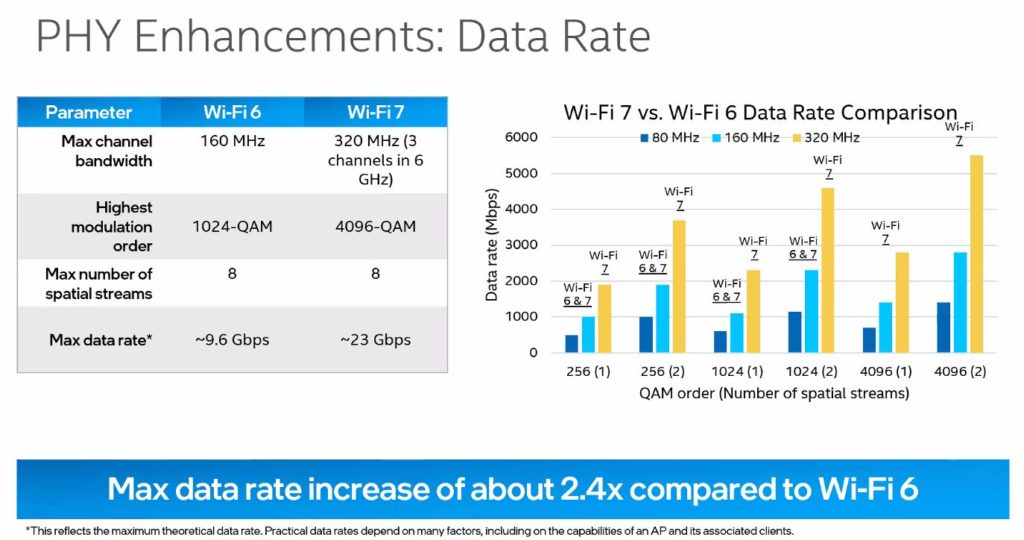

Cordiero also provided a peek into Wi-Fi 7, which likely will appear in 2024. Figure 5 provides the goals of Wi-Fi 7, which include yet higher data rates resulting from the move to 4096 QAM. We’re going to need considerably better signal processing and test equipment to reach that goal.

Figure 5. Wi-Fi 7 increases bandwidth and QAM modulation levels to increase data rate. Source: IEEE/IMS2021
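A quick sketch shows why the move from 1024 QAM to 4096 QAM is demanding. It assumes ideal square constellations at equal average power, using the textbook relation that minimum point spacing scales as sqrt(6 / (M - 1)); none of these numbers come from the talk.

```python
import math

def bits_per_symbol(points):
    """Bits carried by one symbol of a constellation with `points` points."""
    return int(math.log2(points))

def min_distance(points):
    """Minimum spacing of a square QAM constellation with unit average power."""
    return math.sqrt(6 / (points - 1))

rate_gain = bits_per_symbol(4096) / bits_per_symbol(1024) - 1
spacing_loss_db = 20 * math.log10(min_distance(1024) / min_distance(4096))

print(f"Bits per symbol: {bits_per_symbol(1024)} -> {bits_per_symbol(4096)}")
print(f"Raw rate gain: {rate_gain:.0%}")
print(f"Constellation points move closer by {spacing_loss_db:.1f} dB")
```

Under these assumptions, a 20% gain in raw bits per symbol costs about 6 dB in point spacing, which is the margin that transmitters, receivers, and test equipment would have to recover.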

Harish Viswanathany, Nokia Bell Labs, spoke of 5G’s current deficiencies and what we’ll need from 5G-Advanced and 6G (Figure 6).

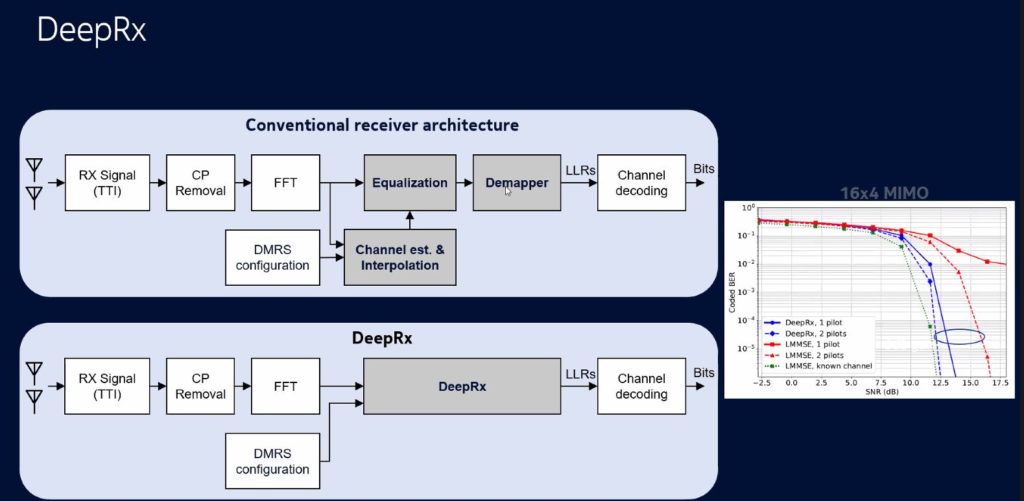

Echoing a theme that’s appeared at other 5G/6G conferences, Viswanathany referred to an AI-designed and AI-powered air interface. Figure 7 shows how several blocks in what he called the “Deep Rx” signal chain might collapse into fewer functional blocks. The theory is that AI/ML becomes part of the PHY. Engineers wouldn’t design radios and the digital PHY entirely from specs; instead, they would let the system work out the best PHY from data about the radio channel. That raises questions about having enough channel data and computational power to do the job, and about how to comply with industry and regulatory technical standards.

Figure 7. AI/ML could figure into the air-interface design of 6G, making it adaptable to channel conditions. Source: IEEE/IMS2021

Commenting on sensing and radar, Viswanathany envisions radar-like digital frames used for sensing, particularly in autonomous vehicles. “We’ll need hundreds of gigabits per second,” he warned. “Sub-terahertz frequencies and OFDM likely won’t be good enough.”

Turning to the energy-consumption issue, Viswanathany asked, “Will we use high-resolution or 1-bit analog-to-digital converters (ADCs)? Power will affect phase noise, which dominates at higher frequencies. We think that a 5-bit ADC and OFDM is a reasonable tradeoff.”
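For a sense of what ADC resolution buys, the sketch below applies the textbook ideal-quantizer formula for a full-scale sine wave, SQNR = 20·log10(2)·N + 10·log10(1.5) dB, or roughly 6.02N + 1.76 dB. It is an idealization (it is only nominal at 1 bit and ignores clipping, jitter, and thermal noise), and the 8-bit case is an assumed stand-in for “high resolution,” not a figure from the talk.

```python
import math

def ideal_sqnr_db(bits):
    """Ideal signal-to-quantization-noise ratio for a full-scale sine wave."""
    return 20 * math.log10(2) * bits + 10 * math.log10(1.5)

for bits in (1, 5, 8):  # 8 bits is an assumed "high-resolution" example
    levels = 2 ** bits
    print(f"{bits}-bit ADC: {levels:3d} levels, ~{ideal_sqnr_db(bits):.1f} dB SQNR")
```

Each added bit doubles the number of quantization levels for about 6 dB more dynamic range, which is the kind of tradeoff against power that the 5-bit suggestion reflects.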

Regarding massive MIMO, Viswanathany sees four times as many antennas used at frequencies higher than those in use today, say above 100 GHz. He sees hybrid beamforming as the best tradeoff between performance and energy consumption. Additionally, Viswanathany expects 6G radios with large bandwidth to simultaneously cover many RF frequency bands.

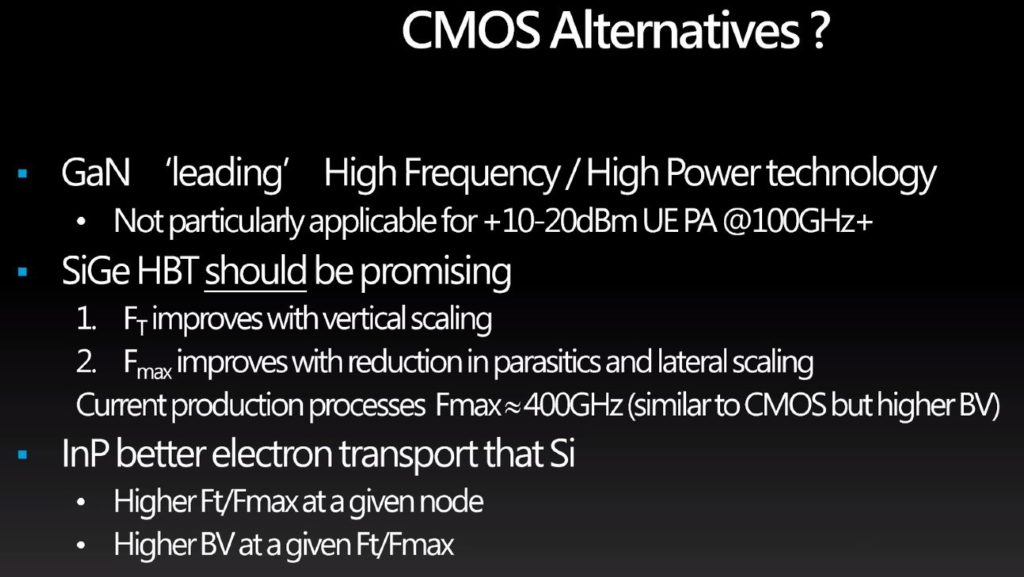

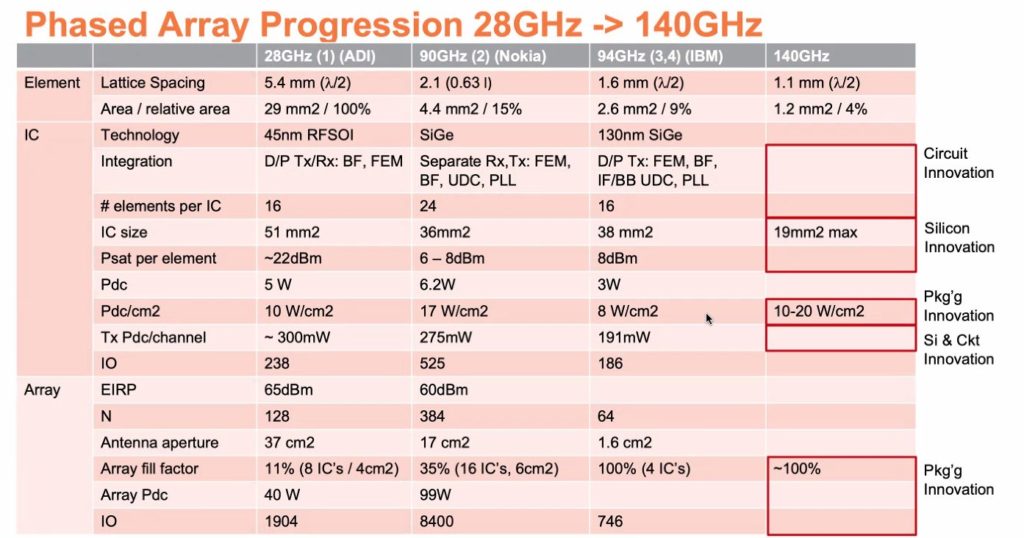

Jon Strange from Mediatek discussed how we’ll need innovation in materials for 6G, in particular for the D-Band (110 GHz to 170 GHz) and the G-Band (275 GHz to 320 GHz). Power issues will gain importance at these sub-terahertz frequencies, with the power amplifier (PA) being a major issue. MIMO configurations of 8×8 and 16×16 antenna arrays will be common in user devices given their small size. “It’s not a design issue, but one of the underlying technology,” he said. He also noted that the technology for a 1 THz transistor isn’t available to foundries and thus CMOS will continue to dominate for some time to come.
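The small size of those arrays follows from the wavelength. The sketch below estimates the edge length of a square array with half-wavelength element spacing; the spacing rule, the 140-GHz mid-D-Band frequency, and the 28-GHz comparison point are assumptions for illustration, not numbers from the talk.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458

def array_edge_mm(frequency_hz, elements_per_side):
    """Approximate edge length of an array with half-wavelength element pitch."""
    wavelength_mm = SPEED_OF_LIGHT_M_PER_S / frequency_hz * 1_000
    return elements_per_side * wavelength_mm / 2

# Assumed frequencies: mid D-Band versus a typical 5G mmWave band.
for label, frequency_hz in (("140 GHz", 140e9), ("28 GHz", 28e9)):
    for n in (8, 16):
        edge = array_edge_mm(frequency_hz, n)
        print(f"{label}, {n}x{n} array: about {edge:.1f} mm per side")
```

Under these assumptions a 16×16 array in the D-Band is under 2 cm on a side, compared with more than 8 cm at 28 GHz.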

According to Strange, CMOS isn’t practical above 250 GHz. While we wait for those new materials and higher-frequency transistors, Strange cited a PA survey from Georgia Tech, and he pointed to several enhancements to CMOS such as SOI, SiGe, and InP (Figure 8) that could keep things going. Don’t discount the need for something to replace copper wires as well.

Figure 8. Jon Strange of Mediatek highlighted enhancements to CMOS that we’ll need until new materials are developed for higher speeds. Source: IEEE/IMS

Strange said that scale is the key to it all. The development costs must be recovered across the millions of devices that will be sold.

Peter Gammel from Global Foundries continued the discussion on semiconductor materials for use in PAs and beamforming. “The industry will need a mix of materials,” he said. “GaN is pushing the metrics and SiGe is the highest performing, with 500 GHz or higher frequencies possible.” Silicon-on-insulator (SOI) also shows promise. Physically stacking transistors allows for higher voltages. “We have to deliver both performance and price. The tradeoff is size versus power.” The table in Figure 9 compares different technologies and where innovation is needed.

Figure 10 shows the concept of transistor stacking on SOI.

Figure 10. Stacking transistors in a PA produces higher output-signal voltages. Source: IEEE/IMS

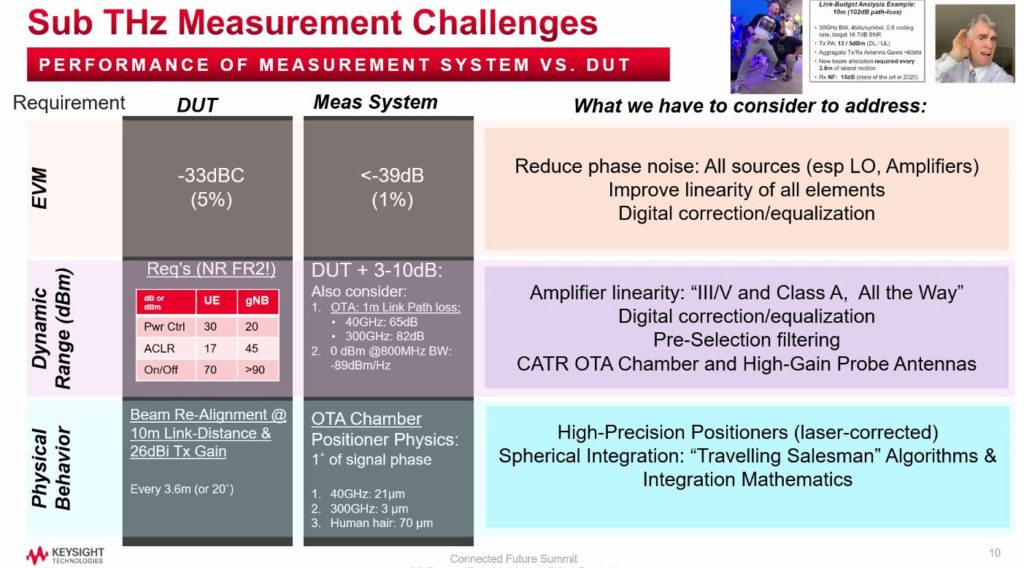

Keysight’s Roger Nichols shifted the conversation to test and measurement. Later, Kate Remley of NIST continued that conversation with a presentation on metrology for wireless systems.

Nichols opened his talk with a take on the hologram in Star Wars. “To achieve holograms with sufficient resolution, we need data rates far beyond what we think of today.” To reach data rates of 100 Gb/sec at 10 m, signal beams must sharpen to compensate for degradation. That requires frequent beam alignment to maintain data rates. Channel bandwidth needs to reach 800 MHz. To achieve such data rates, measurement systems must improve.

Nichols claimed that we have not made a significant improvement in spectral efficiency (bits/sec/Hz) in twenty years. A week later on June 30, Capgemini announced that its machine learning-based RAN application boosts spectral efficiency by 15%.

In Figure 11, Nichols shows an EVM of -33 dBC that’s achievable with today’s test equipment. In the D-Band and G-Band, noise becomes more of a problem because of the wider channel bandwidths. The dynamic range in the table reflects what’s needed for 5G. The right column shows what today’s measurement equipment must achieve to meet the numbers on the left.

Figure 11. Keysight’s Roger Nichols explained what it will take to get test equipment for 6G. Source: IEEE/IMS
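The link between channel bandwidth and noise can be illustrated with the standard thermal-noise relation, P = kTB. This sketch assumes a 290 K reference temperature and an ideal receiver with no added noise figure; the 10-GHz bandwidth is an assumed example of a very wide sub-terahertz channel, not a number from the talk.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23
REFERENCE_TEMP_K = 290  # assumed reference temperature

def thermal_noise_dbm(bandwidth_hz):
    """Thermal noise power kTB in dBm, ignoring receiver noise figure."""
    noise_w = BOLTZMANN_J_PER_K * REFERENCE_TEMP_K * bandwidth_hz
    return 10 * math.log10(noise_w * 1_000)

for bandwidth_hz in (100e6, 800e6, 10e9):  # 10 GHz is an assumed example
    print(f"{bandwidth_hz / 1e6:7.0f} MHz: {thermal_noise_dbm(bandwidth_hz):.1f} dBm")
```

Every tenfold increase in bandwidth raises the noise floor by 10 dB, so a measurement system must gain that much dynamic range just to hold the same signal-to-noise ratio.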

Because reaching 100 Gb/sec speeds requires massive MIMO and over-the-air (OTA) measurements, testing devices and antenna arrays gets difficult. You must consider distance, location, and motion of user devices when testing a base station. The number of antennas in an array can’t get too large because of practical issues such as weight and wind resistance.

Nichols also addressed the power issue at high frequencies where InP PAs at 300 GHz are less than 10% efficient. ADCs are another issue. Nichols noted that a state-of-the-art ADC running at 250 Gsamples/sec consumes about 250 W. “Carriers today,” said Nichols, “spend more money on power than they do on anything else.”

Kate Remley closed the day with a discussion of the measurement methods for wireless systems used at NIST. 5G devices and systems rely on OTA measurements, so engineers need methods to gain confidence in the signals being measured. Because 5G devices use antenna arrays, NIST has developed procedures to calibrate test signals and chambers. That requires reference waveforms to establish known quantities and see how a test chamber alters those signals. Remley noted how NIST engineers calculate parameters such as angle of arrival, delay, reflections, and so on, all with the goal of establishing a measurement uncertainty.

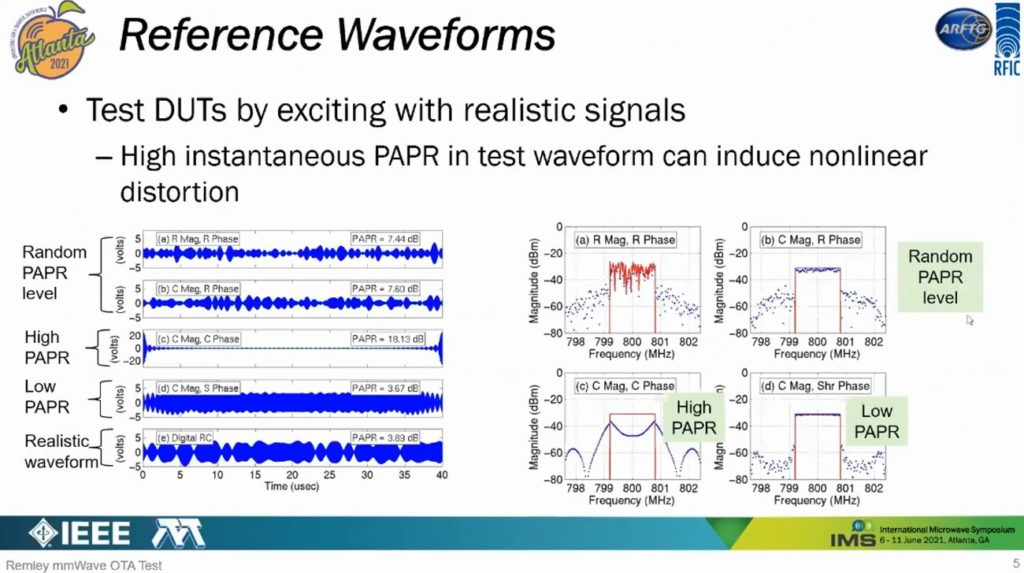

Reference waveforms have different characteristics. In Figure 12, you can see waveforms with random, high, and low peak-to-average power ratio (PAPR) plus a “realistic” waveform. Each waveform has the same average power, but PAPR differs because of the signal’s time-domain characteristics. Those changes in PAPR result in differences in adjacent-channel power ratio (ACPR).

Figure 12. Signals with known PAPR let NIST engineers characterize test chambers for wireless signals. Source: IEEE/IMS
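The idea that waveforms can share the same average power yet differ in PAPR can be shown with a simple multitone signal. This is only an illustrative sketch, not NIST’s reference waveforms: the 16-tone signal, the sample count, and the quadratic (Newman-style) phase set used for the low-PAPR case are all assumptions.

```python
import cmath
import math

def average_power(samples):
    return sum(abs(s) ** 2 for s in samples) / len(samples)

def papr_db(samples):
    """Peak-to-average power ratio in dB."""
    peak_power = max(abs(s) ** 2 for s in samples)
    return 10 * math.log10(peak_power / average_power(samples))

def multitone(phases, num_samples=256):
    """Equal-amplitude complex tones with given phases, scaled to unit average power."""
    raw = [
        sum(cmath.exp(1j * (2 * math.pi * (k + 1) * n / num_samples + phase))
            for k, phase in enumerate(phases))
        for n in range(num_samples)
    ]
    scale = 1 / math.sqrt(average_power(raw))
    return [s * scale for s in raw]

NUM_TONES = 16  # assumed tone count for illustration
high_papr = multitone([0.0] * NUM_TONES)  # all tones peak together
low_papr = multitone([math.pi * k * k / NUM_TONES for k in range(NUM_TONES)])

for name, wave in (("aligned phases", high_papr), ("quadratic phases", low_papr)):
    print(f"{name}: average power {average_power(wave):.2f}, PAPR {papr_db(wave):.1f} dB")
```

Only the tone phases change between the two signals. The spectrum magnitudes and average power are identical, yet the aligned-phase version concentrates its energy into sharp peaks that stress an amplifier far more.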

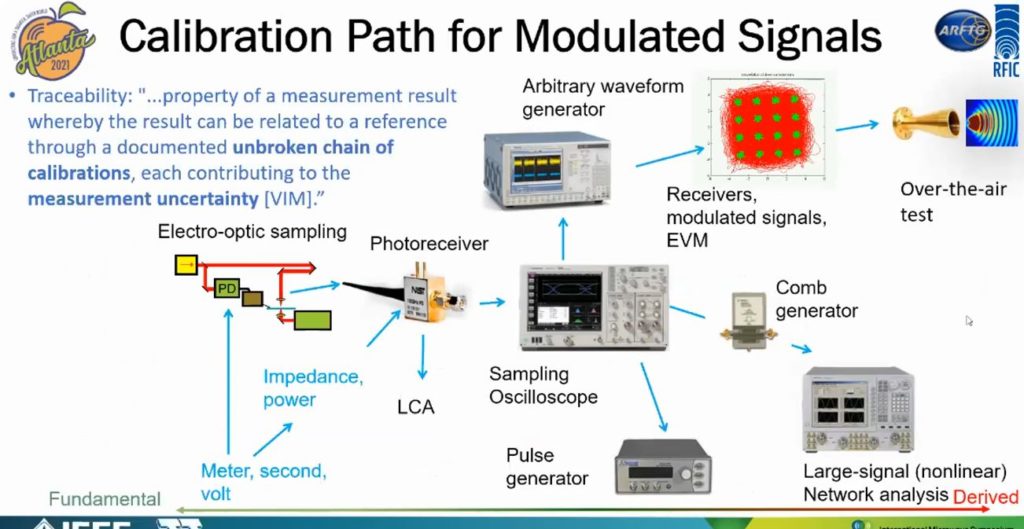

Capturing the signals requires timely measurements. That’s why NIST uses an arbitrary waveform generator to generate sync pulses that trigger test equipment such as oscilloscopes. Figure 13 shows the calibration path.

Figure 13. NIST engineers used several test instruments to develop an OTA measurement calibration procedure. Source: IEEE/IMS

Remley closed by saying, “You don’t want to blame the DUT for something that’s part of the measurement setup or instrumentation.”