Be honest: The uncertainties of leading-edge engineering R&D are quite modest compared to what medical research faces, especially in areas such as epidemiology.

Like many of you, I have been trying to follow many of the numbers related to the Covid-19 virus as well as ongoing vaccine efforts – and I have given up. The main reason is frustration with the credibility of almost all associated numbers and resultant lack of confidence in conclusions drawn from them.

It’s not that the researchers are trying to “fudge the numbers” and thus their predictions (although I suspect some are doing so to get more attention, grants, or an ego boost). The simple fact is that needed numbers collected even by reputable sources are burdened with large amounts of uncertainty. It’s gotten to the point that I ignore almost any virus-related story which has the predictive words “may” or “could” or similar in it, as many of these are extrapolations based on very rickety foundations and numbers, and their extrapolations have a very wide but usually unpublicized error band.

These numbers have an inherent problem: to be blunt, they are unreliable and prone to error. Misdiagnoses are common, for many reasons. The cause of death, for example, may be the result of a quick judgment, while other patient conditions are often incompletely or incorrectly known. Even in non-fatal cases, some individuals aren’t clear about their medical history, and some take drugs they shouldn’t or skip ones they should. It’s a long list of error sources, to which you can add your own variances and tolerances. Data quality also varies with who is making the assessment, the country, the location within the country, how busy the doctors are, and countless other factors.

Long story short: I would not be surprised if many of the data sets used to assess and predict the nuances of the Covid-19 situation have an inherent error of at least ±20 percent, and possibly much more. Worse, if you extrapolate from data with such a wide error band, and the extrapolation is exponential rather than simply linear – as it often is in virus-like situations – you can be so far off in the end that you might as well throw darts at a board and get answers with comparable accuracy.
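To put rough numbers on that point, here is a minimal Python sketch of how a ±20 percent error band widens under linear versus exponential extrapolation. Everything in it is an assumption made for illustration: the starting count of 1,000, the nominal growth rate of 0.10 per day, the choice to apply the ±20 percent to the growth rate rather than to the raw counts, and the function names themselves. It is not a model of any real Covid-19 data set.

```python
import math


def linear_projection(n0, rate, days):
    """Linear growth: the count rises by n0 * rate every day."""
    return n0 * (1 + rate * days)


def exponential_projection(n0, rate, days):
    """Exponential growth: the count compounds continuously at `rate` per day."""
    return n0 * math.exp(rate * days)


def projection_band(project, n0, rate, rel_error, days):
    """Return (low, nominal, high) projections when `rate` is off by +/- rel_error."""
    low = project(n0, rate * (1 - rel_error), days)
    nominal = project(n0, rate, days)
    high = project(n0, rate * (1 + rel_error), days)
    return low, nominal, high


if __name__ == "__main__":
    # Hypothetical inputs: 1,000 cases today, 0.10/day growth, +/-20% rate error.
    n0, rate, rel_error = 1000, 0.10, 0.20
    for days in (7, 30, 60):
        for name, project in (("linear", linear_projection),
                              ("exponential", exponential_projection)):
            low, nominal, high = projection_band(project, n0, rate, rel_error, days)
            print(f"day {days:2d} {name:12s} low={low:>12,.0f} "
                  f"nominal={nominal:>12,.0f} high={high:>12,.0f} "
                  f"high/low={high / low:.2f}x")
```

Under these assumed inputs, the linear band at 30 days spans a high-to-low ratio of about 1.35, while the exponential band spans a ratio of exp(1.2), or roughly 3.3, and that ratio itself keeps growing exponentially with the forecast horizon.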

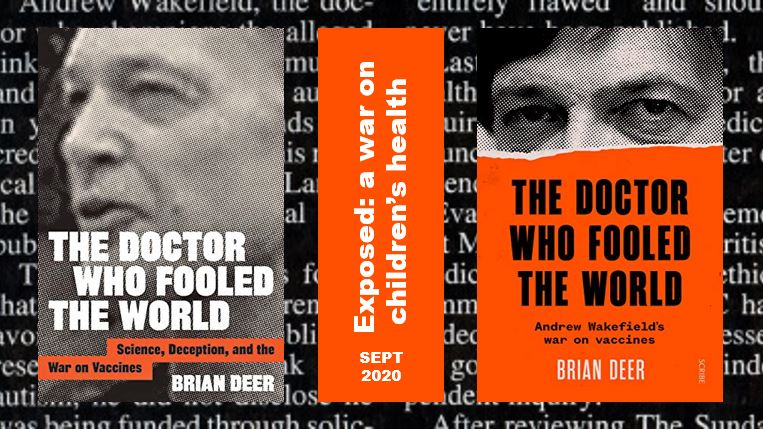

I gave some more thought to the problems medical researchers in general and epidemiologists in particular face when I read Michael Shermer’s excellent piece “‘The Doctor Who Fooled the World’ Review: Vax Populi” in The Wall Street Journal, reviewing the book “The Doctor Who Fooled the World” by Brian Deer (sorry, it may be behind a paywall). The book, Figure 1, details the outright fraud committed by former British doctor Andrew Wakefield as he claimed vaccines were causing autism and other serious problems in young children. (We’re not here to discuss pro- and anti-vaccine arguments, but the fundamental research-fraud charges against the doctor, who eventually lost his license, were proven beyond any doubt.)

The very readable review begins by assessing the fundamental problem of much “research”: that so little time and effort is devoted to replicating results. Yet such replication is a keystone of scientific validation and confirmation. Shermer points out that the results of many noted and apparently important studies cannot be reproduced, so those studies probably never should have been published in the first place. Why this lack of replication effort? He says it is due to multiple factors:

- the pressure to “publish or perish” that leads researchers to cut corners;

- the “file-drawer” problem, where seemingly nonsignificant results go unreported;

- data dredging and what is called “p-hacking,” where researchers manipulate statistical techniques to produce data sets that support their hypotheses;

- “Texas sharpshooting,” where the researcher draws a bull’s-eye around a random cluster of data and calls it a pattern;

- finally, there is the publication bias of journals that prefer original research over replication studies to boost their own prestige and get attention.

Whew… that’s quite a set of burdens to overcome, I realized.

Part 2 looks at the same scenario for engineering research, and the contrast.